This version of the transform class does a mean normalization: adding an offset to its input so that the difference (per speaker) of the transformed class means from the speaker-independent class means is minimized.


| Return type | Member function | Description |
|---|---|---|
| int32 | Dim () const override | Return the dimension of the input and output features. |
| int32 | NumClasses () const override | |
| MinibatchInfoItf * | TrainingForward (const CuMatrixBase< BaseFloat > &input, int32 num_chunks, int32 num_spk, const Posterior &posteriors, CuMatrixBase< BaseFloat > *output) const override | This is the function you call in training time, for the forward pass; it adapts the features. |
| virtual void | TrainingBackward (const CuMatrixBase< BaseFloat > &input, const CuMatrixBase< BaseFloat > &output_deriv, int32 num_chunks, int32 num_spk, const Posterior &posteriors, const MinibatchInfoItf &minibatch_info, CuMatrixBase< BaseFloat > *input_deriv) const override | This does the backpropagation, during the training pass. |
| void | Accumulate (const CuMatrixBase< BaseFloat > &input, int32 num_chunks, int32 num_spk, const Posterior &posteriors) override | |
| virtual void | TestingForward (const MatrixBase< BaseFloat > &input, const SpeakerStatsItf &speaker_stats, MatrixBase< BaseFloat > *output) override | |
| void | Estimate () override | |
| | AppendTransform (const AppendTransform &other) | |
| DifferentiableTransform * | Copy () const override | |
| void | Write (std::ostream &os, bool binary) const override | |
| void | Read (std::istream &is, bool binary) override | |
| int32 | NumClasses () const | Return the number of classes in the model used for adaptation. |
| virtual void | SetNumClasses (int32 num_classes) | This can be used to change the number of classes. |
| virtual int32 | NumFinalIterations ()=0 | Returns the number of times you have to (call Accumulate() on a subset of data, then call Estimate()) |
| virtual void | Accumulate (int32 final_iter, const CuMatrixBase< BaseFloat > &input, int32 num_chunks, int32 num_spk, const Posterior &posteriors)=0 | This will typically be called sequentially, minibatch by minibatch, for a subset of training data, after training the neural nets, followed by a call to Estimate(). |
| virtual void | Estimate (int32 final_iter)=0 | |
| virtual SpeakerStatsItf * | GetEmptySpeakerStats ()=0 | |
| virtual void | TestingAccumulate (const MatrixBase< BaseFloat > &input, const Posterior &posteriors, SpeakerStatsItf *speaker_stats) const =0 | |
| virtual void | TestingForward (const MatrixBase< BaseFloat > &input, const SpeakerStatsItf &speaker_stats, MatrixBase< BaseFloat > *output) const =0 | |

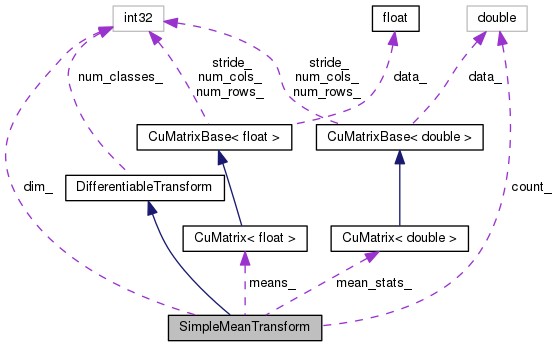

This version of the transform class does a mean normalization: adding an offset to its input so that the difference (per speaker) of the transformed class means from the speaker-independent class means is minimized.

This is like mean-only fMLLR with a fixed (say, unit) covariance model.
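To make the fMLLR analogy concrete, here is a minimal C++ sketch of how such a per-speaker offset could be estimated under a unit-covariance assumption. It is an illustration, not Kaldi's implementation. With soft counts γ_c, per-speaker class means m_c and speaker-independent class means μ_c, minimizing Σ_c γ_c ‖m_c + b − μ_c‖² gives b = Σ_c γ_c (μ_c − m_c) / Σ_c γ_c. The names `ClassStats` and `EstimateOffset` are hypothetical, and plain `std::vector` stands in for Kaldi's matrix types.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical per-speaker statistics for one class (not a Kaldi type).
struct ClassStats {
  double count;             // soft count (sum of posteriors) for this class
  std::vector<double> sum;  // posterior-weighted sum of this speaker's features
};

// Returns the offset b minimizing sum_c count_c * ||m_c + b - mu_c||^2, where
// m_c = sum_c / count_c is the speaker's class mean and mu_c = si_means[c].
// Assumes unit covariance; returns a zero offset if the total count is zero.
std::vector<double> EstimateOffset(
    const std::vector<ClassStats> &stats,
    const std::vector<std::vector<double> > &si_means, std::size_t dim) {
  assert(stats.size() == si_means.size());
  std::vector<double> offset(dim, 0.0);
  double total_count = 0.0;
  for (std::size_t c = 0; c < stats.size(); ++c) {
    assert(stats[c].sum.size() == dim && si_means[c].size() == dim);
    total_count += stats[c].count;
    for (std::size_t d = 0; d < dim; ++d) {
      // count_c * (mu_c - m_c) == count_c * mu_c - sum_c
      offset[d] += stats[c].count * si_means[c][d] - stats[c].sum[d];
    }
  }
  if (total_count > 0.0) {
    for (std::size_t d = 0; d < dim; ++d) offset[d] /= total_count;
  }
  return offset;
}
```

Adding the returned offset to every frame of the speaker shifts the speaker's class means toward the speaker-independent ones in the count-weighted least-squares sense.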

Definition at line 524 of file differentiable-transform.h.

This is the function you call at training time, for the forward pass; it adapts the features.

Here, "training time" assumes that you are training the 'bottom' neural net, which produces the features in 'input'; if you were not training it, the situation would be equivalent to test time as far as this function is concerned.

- Parameters

| Direction | Name | Description |
|---|---|---|
| [in] | input | The original, un-adapted features; these will typically be output by a neural net, the 'bottom' net in our terminology. This will correspond to a whole minibatch, consisting of multiple speakers and multiple sequences (chunks) per speaker. Caution: the rows of the input and output features, and the posteriors, are not ordered as blocks, one per sequence, but as blocks, one per time frame, so the sequences are interleaved. |
| [in] | num_chunks | The number of individual sequences (e.g., chunks of speech) represented in 'input'. input.NumRows() will equal num_chunks times the number of time frames. |
| [in] | num_spk | The number of speakers. Must be greater than one, and must divide num_chunks. The number of chunks per speaker (num_chunks / num_spk) must be the same for all speakers, and the chunks for a speaker must be consecutive. |
| [in] | posteriors | (note: this is a vector of vector of pair<int32,BaseFloat>). This provides, in 'soft-count' form, the class supervision information that is used for the adaptation. posteriors.size() will be equal to input.NumRows(), and the ordering of its elements is the same as the ordering of the rows of input, i.e. the sequences are interleaved. There is no assumption that the posteriors sum to one; this allows you to do things like silence weighting. |
| [out] | output | The adapted output. This matrix should have the same dimensions as 'input'. |

- Returns

- This function returns either NULL or an object of type MinibatchInfoItf* (matching the declared return type), which is expected to be given to the function TrainingBackward(). It stores any information that will be needed in the backprop phase.
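The row layout described for 'input' and 'posteriors' can be sketched as index arithmetic. This is a hedged illustration: it assumes that "blocks, one per time frame" means row = t * num_chunks + chunk, and that each speaker owns a run of consecutive chunks. The helper names `RowIndex`, `SpeakerOfChunk` and `NumFrames` are hypothetical and not part of Kaldi.

```cpp
#include <cassert>

// Row of 'input' holding time frame t of chunk 'chunk', assuming rows are
// grouped into blocks of num_chunks rows, one block per time frame.
int RowIndex(int t, int chunk, int num_chunks) {
  assert(t >= 0 && chunk >= 0 && chunk < num_chunks);
  return t * num_chunks + chunk;
}

// Speaker index of a chunk, assuming each speaker owns
// num_chunks / num_spk consecutive chunks.
int SpeakerOfChunk(int chunk, int num_chunks, int num_spk) {
  assert(num_spk > 0 && num_chunks % num_spk == 0);
  assert(chunk >= 0 && chunk < num_chunks);
  int chunks_per_spk = num_chunks / num_spk;
  return chunk / chunks_per_spk;
}

// Number of time frames implied by the row count of 'input'.
int NumFrames(int num_rows, int num_chunks) {
  assert(num_chunks > 0 && num_rows % num_chunks == 0);
  return num_rows / num_chunks;
}
```

Under these assumptions, with num_chunks = 4 and num_spk = 2, frame 3 of chunk 1 sits in row 13, chunk 1 belongs to speaker 0, and a 40-row input holds 10 time frames.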

Implements DifferentiableTransform.
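As noted for 'posteriors', the soft counts need not sum to one, which permits silence weighting. The following is a minimal sketch of that idea using a local stand-in for the vector-of-vector-of-pair layout. The alias `PosteriorSketch`, the function `ScaleSilence`, and the choice of silence class ids are hypothetical and not Kaldi API.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <set>
#include <utility>
#include <vector>

// Local stand-in for the posterior layout: one vector of (class, weight)
// pairs per row of the feature matrix.
typedef std::vector<std::vector<std::pair<int32_t, float> > > PosteriorSketch;

// Multiplies the weight of every entry whose class is in 'silence_classes'
// by 'silence_scale', leaving all other entries unchanged.
void ScaleSilence(const std::set<int32_t> &silence_classes,
                  float silence_scale, PosteriorSketch *post) {
  assert(post != nullptr && silence_scale >= 0.0f);
  for (std::size_t i = 0; i < post->size(); ++i) {
    for (std::size_t j = 0; j < (*post)[i].size(); ++j) {
      if (silence_classes.count((*post)[i][j].first) != 0)
        (*post)[i][j].second *= silence_scale;
    }
  }
}
```

After such scaling, rows dominated by silence contribute less to the adaptation statistics, while the number and ordering of rows are unchanged.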

Definition at line 528 of file differentiable-transform.h.

References CuMatrixBase< Real >::CopyFromMat().

output->CopyFromMat(input);
