Matrix for CUDA computing. More...

#include <matrix-common.h>

Public Member Functions | |

| void | CopyCols (const CuMatrixBase< Real > &src, const CuArrayBase< MatrixIndexT > &indexes) |

| Copies column r from column indexes[r] of src. More... | |

| void | AddCols (const CuMatrixBase< Real > &src, const CuArrayBase< MatrixIndexT > &indices) |

| Add column indices[r] of src to column r. More... | |

| void | CopyRows (const CuMatrixBase< Real > &src, const CuArrayBase< MatrixIndexT > &indexes) |

| Copies row r from row indexes[r] of src. More... | |

| void | CopyRows (const CuArrayBase< const Real *> &src) |

| Copies row r of this matrix from an array of floats at the location given by src[r], where src[r] is assumed to be obtained from the RowData() function of another CuMatrix, or from CuVector::Data() (the point is: the data it points to should be on the GPU if we're using a GPU, and on a CPU otherwise). More... | |

| void | CopyToRows (const CuArrayBase< Real *> &dst) const |

| For each row r of this matrix, copies it to the array of floats at the location given by dst[r], where dst[r] is assumed to be obtained from the RowData() function of another CuMatrix, or from CuVector::Data() (i.e. More... | |

| void | AddRows (Real alpha, const CuMatrixBase< Real > &src, const CuArrayBase< MatrixIndexT > &indexes) |

| Does for each row r, this.Row(r) += alpha * src.Row(indexes[r]). More... | |

| void | MulRows (const CuMatrixBase< Real > &src, const CuArrayBase< MatrixIndexT > &indexes) |

| Does for each row r, this.Row(r) *= src.Row(indexes[r]), where '*=' is elementwise multiplication. More... | |

| void | AddRows (Real alpha, const CuArrayBase< const Real *> &src) |

| Does for each row r, this.Row(r) += alpha * src[r], treating src[r] as the beginning of a region of memory representing a vector of floats, of the same length as this.NumCols(). More... | |

| void | AddToRows (Real alpha, const CuArrayBase< MatrixIndexT > &indexes, CuMatrixBase< Real > *dst) const |

| For each row i of *this, adds alpha * this->Row(i) to dst->Row(indexes(i)) if indexes(i) >= 0, else does nothing. More... | |

| void | AddToRows (Real alpha, const CuArrayBase< Real *> &dst) const |

| For each row r of this matrix, adds it (times alpha) to the array of floats at the location given by dst[r], where dst[r] is assumed to be obtained from the RowData() function of another CuMatrix, or from CuVector::Data() (i.e. More... | |

| void | SumColumnRanges (const CuMatrixBase< Real > &src, const CuArrayBase< Int32Pair > &indexes) |

| For each row r of this and for each column c, sets (*this)(r, c) to the sum src(r, j), where j ranges from indexes[c].first through indexes[c].second - 1. More... | |

| void | AddRowRanges (const CuMatrixBase< Real > &src, const CuArrayBase< Int32Pair > &indexes) |

| For each row r of this and for each column c, do (*this)(r, c) += src(j, c), where j ranges from indexes[r].first through indexes[r].second - 1. More... | |

| void | AddToDiag (Real value) |

| Adds "value" to the diagonal elements of the matrix. More... | |

| MatrixIndexT | NumRows () const |

| Dimensions. More... | |

| MatrixIndexT | NumCols () const |

| MatrixIndexT | Stride () const |

| ::MatrixDim | Dim () const |

| Real | FrobeniusNorm () const |

| bool | IsUnit (Real tol=0.001) const |

| bool | ApproxEqual (const CuMatrixBase< Real > &other, float tol=0.01) const |

| True if ((*this)-other).FrobeniusNorm() <= tol * this->FrobeniusNorm() More... | |

| MatrixIndexT | SizeInBytes () const |

| Get size of matrix in bytes. More... | |

| template<typename OtherReal > | |

| void | CopyFromMat (const MatrixBase< OtherReal > &src, MatrixTransposeType trans=kNoTrans) |

| void | CopyFromGeneralMat (const GeneralMatrix &src, MatrixTransposeType trans=kNoTrans) |

| void | CopyFromMat (const MatrixBase< Real > &src, MatrixTransposeType trans=kNoTrans) |

| void | CopyFromSp (const CuSpMatrix< Real > &M) |

| template<typename OtherReal > | |

| void | CopyFromTp (const CuTpMatrix< OtherReal > &M, MatrixTransposeType trans=kNoTrans) |

| void | CopyRangeFromMatClamped (const CuMatrixBase< Real > &src, int32_t start_range, int32_t end_range, int32_t clamp_low, int32_t clamp_high) |

| template<typename OtherReal > | |

| void | CopyFromMat (const CuMatrixBase< OtherReal > &M, MatrixTransposeType trans=kNoTrans) |

| template<typename OtherReal > | |

| void | CopyToMat (MatrixBase< OtherReal > *dst, MatrixTransposeType trans=kNoTrans) const |

| void | CopyRowsFromVec (const CuVectorBase< Real > &v) |

| This function has two modes of operation. More... | |

| void | CopyRowsFromVec (const VectorBase< Real > &v) |

| Version of CopyRowsFromVec() that takes a CPU-based vector. More... | |

| void | CopyColsFromVec (const CuVectorBase< Real > &v) |

| Copies vector into matrix, column-by-column. More... | |

| void | CopyColFromVec (const CuVectorBase< Real > &v, const MatrixIndexT col) |

| Copy vector into specific column of matrix. More... | |

| void | Sigmoid (const CuMatrixBase< Real > &src) |

| Set each element to the sigmoid of the corresponding element of "src": element by element, x = 1 / (1 + exp(-x)) More... | |

| void | Heaviside (const CuMatrixBase< Real > &src) |

| Set each element to the Heaviside function of the corresponding element of "src", which we define as the function (x > 0 ? 1.0 : 0.0) [note: in general, there are different ways to deal with the situation when x==0. More... | |

| void | Exp (const CuMatrixBase< Real > &src) |

| void | Log (const CuMatrixBase< Real > &src) |

| void | Pow (const CuMatrixBase< Real > &src, Real power) |

| void | PowAbs (const CuMatrixBase< Real > &src, Real power, bool include_sign=false) |

| Apply power to the absolute value of each element. More... | |

| void | Floor (const CuMatrixBase< Real > &src, Real floor_val) |

| void | Ceiling (const CuMatrixBase< Real > &src, Real ceiling_val) |

| void | ExpLimited (const CuMatrixBase< Real > &src, Real lower_limit, Real upper_limit) |

| This is equivalent to running: Floor(src, lower_limit); Ceiling(src, upper_limit); Exp(src) More... | |

| void | ExpSpecial (const CuMatrixBase< Real > &src) |

| For each element x of the matrix, set it to (x < 0 ? exp(x) : x + 1). More... | |

| void | SoftMaxPerRow (const CuMatrixBase< Real > &src) |

| Softmax nonlinearity Y = Softmax(X) : Yij = e^Xij / sum_k(e^Xik), done to each row, with attention to avoiding overflow or underflow. More... | |

| void | LogSoftMaxPerRow (const CuMatrixBase< Real > &src) |

| LogSoftmax nonlinearity Y = LogSoftmax(X) : Yij = Xij - log(sum_k(e^Xik)), done to each row, with attention to avoiding overflow or underflow. More... | |

| void | SoftHinge (const CuMatrixBase< Real > &src) |

| Apply the function y = log(1 + exp(x)), to each element. More... | |

| void | GroupPnorm (const CuMatrixBase< Real > &src, Real pow) |

| Apply the function y(i) = (sum_{j = i*G}^{(i+1)*G-1} x_j ^ (power)) ^ (1 / power), where G = x.NumCols() / y.NumCols() must be an integer. More... | |

| void | DiffGroupPnorm (const CuMatrixBase< Real > &in_value, const CuMatrixBase< Real > &out_value, const CuMatrixBase< Real > &out_deriv, Real power) |

| Differentiate backward through the GroupPnorm function. More... | |

| void | GroupMax (const CuMatrixBase< Real > &src) |

| Apply the function y(i) = max_{j = i*G}^{(i+1)*G-1} x_j, where G = x.NumCols() / y.NumCols() must be an integer. More... | |

| void | GroupMaxDeriv (const CuMatrixBase< Real > &input, const CuMatrixBase< Real > &output) |

| Calculate derivatives for the GroupMax function above, where "input" is the input to the GroupMax function above (i.e. More... | |

| void | ParametricRelu (const CuMatrixBase< Real > &src, const CuVectorBase< Real > &alpha, const CuVectorBase< Real > &beta) |

| Compute the parametric rectified linear unit function; element by element, *this = src * (src > 0 ? alpha : beta) More... | |

| void | DiffParametricRelu (const CuMatrixBase< Real > &value, const CuMatrixBase< Real > &diff, const CuVectorBase< Real > &alpha, const CuVectorBase< Real > &beta) |

| Differentiate backward through the parametric relu function. More... | |

| void | Tanh (const CuMatrixBase< Real > &src) |

| Compute the hyperbolic tangent (tanh) function; element by element, *this = tanh(src). More... | |

| void | DiffSigmoid (const CuMatrixBase< Real > &value, const CuMatrixBase< Real > &diff) |

| Differentiate backward through the sigmoid function. More... | |

| void | DiffTanh (const CuMatrixBase< Real > &value, const CuMatrixBase< Real > &diff) |

| Differentiate backward through the tanh function. More... | |

| void | DiffSoftmaxPerRow (const CuMatrixBase< Real > &value, const CuMatrixBase< Real > &diff) |

| Differentiate backward through the softmax function. More... | |

| void | DiffLogSoftmaxPerRow (const CuMatrixBase< Real > &out_value, const CuMatrixBase< Real > &out_deriv) |

| Differentiate backward through the log softmax function. More... | |

| void | DiffXent (const CuArrayBase< int32 > &tgt, CuVector< Real > *log_post_tgt) |

| Differentiate the block [softmax+cross-entropy] : dE/da = posterior_mat - target_mat, 'E' is error function, 'a' is activation on softmax input. More... | |

| void | Cholesky (CuMatrixBase< Real > *inv_cholesky=NULL) |

| This function sets *this to the Cholesky factor of *this (i.e. More... | |

| void | SymInvertPosDef () |

| Inversion for positive definite symmetric matrices. More... | |

| void | ApplyPow (Real power) |

| void | ApplyPowAbs (Real power, bool include_sign=false) |

| void | ApplyHeaviside () |

| void | ApplyFloor (Real floor_val) |

| void | ApplyCeiling (Real ceiling_val) |

| void | ApplyExp () |

| void | ApplyExpLimited (Real lower_limit, Real upper_limit) |

| void | ApplyExpSpecial () |

| void | ApplySoftMaxPerRow () |

| void | ApplyLogSoftMaxPerRow () |

| void | ApplyLog () |

| void | FindRowMaxId (CuArray< int32 > *id) const |

| Find the id of the maximal element for each row (resizes the 'id' array to the appropriate size). More... | |

| void | SetZero () |

| Math operations, some calling kernels. More... | |

| void | Set (Real value) |

| void | Add (Real value) |

| void | SetZeroAboveDiag () |

| Zeroes all elements for which col > row. More... | |

| void | Scale (Real value) |

| void | MulElements (const CuMatrixBase< Real > &A) |

| Multiply two matrices elementwise: C = C .* A. More... | |

| void | DivElements (const CuMatrixBase< Real > &A) |

| Divide two matrices elementwise: C = C ./ A. More... | |

| void | Max (const CuMatrixBase< Real > &A) |

| Do, elementwise, *this = max(*this, A). More... | |

| void | Min (const CuMatrixBase< Real > &A) |

| Do, elementwise, *this = min(*this, A). More... | |

| void | MulColsVec (const CuVectorBase< Real > &scale) |

| scale i'th column by scale[i] More... | |

| void | MulRowsVec (const CuVectorBase< Real > &scale) |

| scale i'th row by scale[i] More... | |

| void | MulRowsGroupMat (const CuMatrixBase< Real > &src) |

| divide each row into src.NumCols() groups, and then scale i'th row's jth group of elements by src[i, j]. More... | |

| void | DivRowsVec (const CuVectorBase< Real > &div) |

| divide i'th row by div[i] More... | |

| void | InvertElements () |

| invert the matrix by elements. More... | |

| void | AddMat (Real alpha, const CuMatrixBase< Real > &A, MatrixTransposeType trans=kNoTrans) |

| *this += alpha * A More... | |

| void | AddSmat (Real alpha, const CuSparseMatrix< Real > &A, MatrixTransposeType trans=kNoTrans) |

| *this += alpha * A. More... | |

| void | AddSmatMat (Real alpha, const CuSparseMatrix< Real > &A, MatrixTransposeType transA, const CuMatrixBase< Real > &B, Real beta) |

| (*this) = alpha * op(A) * B + beta * (*this), where A is sparse. More... | |

| void | AddMatSmat (Real alpha, const CuMatrixBase< Real > &A, const CuSparseMatrix< Real > &B, MatrixTransposeType transB, Real beta) |

| (*this) = alpha * A * op(B) + beta * (*this), where B is sparse and op(B) is either B or trans(B) depending on the 'transB' argument. More... | |

| void | AddToElements (Real alpha, const CuArrayBase< int32 > &elements) |

| This is a rather special purpose function; we might generalize it later by adding a transpose-type option. More... | |

| void | AddMatBlocks (Real alpha, const CuMatrixBase< Real > &A, MatrixTransposeType trans=kNoTrans) |

| This function is like AddMat (it does *this += alpha * src), except that it supports cases where *this and src have different dimension. More... | |

| void | AddVecToCols (Real alpha, const CuVectorBase< Real > &col, Real beta=1.0) |

| (for each column c of *this), c = alpha * col + beta * c More... | |

| void | AddVecToRows (Real alpha, const CuVectorBase< Real > &row, Real beta=1.0) |

| (for each row r of *this), r = alpha * row + beta * r More... | |

| void | AddMatMat (Real alpha, const CuMatrixBase< Real > &A, MatrixTransposeType transA, const CuMatrixBase< Real > &B, MatrixTransposeType transB, Real beta) |

| C = alpha * A(^T)*B(^T) + beta * C. More... | |

| void | AddVecVec (Real alpha, const CuVectorBase< Real > &x, const CuVectorBase< Real > &y) |

| A = alpha * x * y^T + A. More... | |

| void | SetMatMatDivMat (const CuMatrixBase< Real > &A, const CuMatrixBase< Real > &B, const CuMatrixBase< Real > &C) |

| *this = A * B / C (by element; when C = 0, *this = A). *this can safely be an alias of A, B or C and still give the expected result. More... | |

| void | SymAddMat2 (const Real alpha, const CuMatrixBase< Real > &M, MatrixTransposeType transA, Real beta) |

| *this = beta * *this + alpha * M M^T, for symmetric matrices. More... | |

| void | AddMatBlock (Real alpha, const CuMatrixBase< Real > &A, MatrixTransposeType transA, const CuBlockMatrix< Real > &B, MatrixTransposeType transB, Real beta) |

| This function is like AddMatMat, but for the case where the second matrix argument is of type CuBlockMatrix (a block-diagonal matrix of blocks). More... | |

| void | AddDiagVecMat (const Real alpha, const CuVectorBase< Real > &v, const CuMatrixBase< Real > &M, MatrixTransposeType transM, Real beta=1.0) |

| *this = beta * *this + alpha * diag(v) * M [or M^T]. More... | |

| void | AddMatDiagVec (const Real alpha, const CuMatrixBase< Real > &M, MatrixTransposeType transM, CuVectorBase< Real > &v, Real beta=1.0) |

| void | AddMatMatElements (const Real alpha, const CuMatrixBase< Real > &A, const CuMatrixBase< Real > &B, const Real beta) |

| *this = beta * *this + alpha * A .* B (.* element by element multiplication) More... | |

| void | AddMatSp (const Real alpha, const CuMatrixBase< Real > &A, MatrixTransposeType transA, const CuSpMatrix< Real > &B, const Real beta) |

| this <- beta*this + alpha*A*B More... | |

| void | AddSpMat (const Real alpha, const CuSpMatrix< Real > &A, const CuMatrixBase< Real > &B, MatrixTransposeType transB, const Real beta) |

| this <- beta*this + alpha*SpA*B More... | |

| void | AddTpMat (const Real alpha, const CuTpMatrix< Real > &A, MatrixTransposeType transA, const CuMatrixBase< Real > &B, MatrixTransposeType transB, const Real beta) |

| this <- beta*this + alpha*A*B. More... | |

| void | AddMatTp (const Real alpha, const CuMatrixBase< Real > &A, MatrixTransposeType transA, const CuTpMatrix< Real > &B, MatrixTransposeType transB, const Real beta) |

| this <- beta*this + alpha*A*B. More... | |

| void | CopyFromBlock (const CuBlockMatrix< Real > &B, MatrixTransposeType trans=kNoTrans) |

| void | CopyLowerToUpper () |

| void | CopyUpperToLower () |

| CuSubMatrix< Real > | Range (const MatrixIndexT row_offset, const MatrixIndexT num_rows, const MatrixIndexT col_offset, const MatrixIndexT num_cols) const |

| CuSubMatrix< Real > | RowRange (const MatrixIndexT row_offset, const MatrixIndexT num_rows) const |

| CuSubMatrix< Real > | ColRange (const MatrixIndexT col_offset, const MatrixIndexT num_cols) const |

| const CuSubVector< Real > | Row (MatrixIndexT i) const |

| CuSubVector< Real > | Row (MatrixIndexT i) |

| CuValue< Real > | operator() (MatrixIndexT r, MatrixIndexT c) |

| Real | operator() (MatrixIndexT r, MatrixIndexT c) const |

| Real | Sum () const |

| Real | Max () const |

| Real | Min () const |

| Real | Trace (bool check_square=true) const |

| Return the trace. If check_square = true, will crash if matrix is not square. More... | |

| void | SetRandn () |

| void | SetRandUniform () |

| void | Write (std::ostream &os, bool binary) const |

| void | AddElements (Real alpha, const std::vector< MatrixElement< Real > > &input) |

| void | AddElements (Real alpha, const CuArrayBase< Int32Pair > &indexes, const Real *input) |

| void | Lookup (const std::vector< Int32Pair > &indexes, Real *output) const |

| void | Lookup (const CuArrayBase< Int32Pair > &indexes, Real *output) const |

| void | EqualElementMask (const CuMatrixBase< Real > &mat, CuMatrix< Real > *mask) const |

| const Real * | RowData (MatrixIndexT r) const |

| Get raw row pointer (const). More... | |

| Real * | RowData (MatrixIndexT r) |

| Get raw row pointer. More... | |

| const Real * | Data () const |

| Return data pointer (const). More... | |

| Real * | Data () |

| Return data pointer. More... | |

| const MatrixBase< Real > & | Mat () const |

| MatrixBase< Real > & | Mat () |

Protected Member Functions | |

| CuMatrixBase () | |

| CuMatrixBase (Real *data, MatrixIndexT num_rows, MatrixIndexT num_cols, MatrixIndexT stride) | |

| This constructor takes the #rows, #cols and stride; it's called from the constructor of CuSubMatrix. More... | |

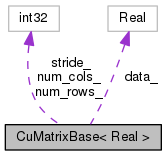

Protected Attributes | |

| Real * | data_ |

| GPU data pointer (or regular matrix data pointer,. More... | |

| MatrixIndexT | num_cols_ |

| MatrixIndexT | num_rows_ |

| MatrixIndexT | stride_ |

Private Member Functions | |

| KALDI_DISALLOW_COPY_AND_ASSIGN (CuMatrixBase) | |

Matrix for CUDA computing.

Does the computation on the CUDA card when CUDA is compiled in and we have a suitable GPU (CuDevice::Instantiate().Enabled() == true); otherwise, does it on the CPU.

Definition at line 69 of file matrix-common.h.

| CuMatrixBase | ( | ) | inline protected |

Definition at line 767 of file cu-matrix.h.

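| CuMatrixBase | ( | Real * | data, |

| MatrixIndexT | num_rows, | ||

| MatrixIndexT | num_cols, | ||

| MatrixIndexT | stride | ||

| ) | inline protected |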

This constructor takes the #rows, #cols and stride; it's called from the constructor of CuSubMatrix.

Definition at line 771 of file cu-matrix.h.

| void Add | ( | Real | value | ) |

Definition at line 582 of file cu-matrix.cc.

Referenced by CuMatrixBase< float >::ApplyLog(), BackpropTruncationComponent::Backprop(), TanhComponent::Backprop(), LstmNonlinearityComponent::ConsolidateMemory(), kaldi::CuCompressedMatrixTestNonnegative(), kaldi::CuCompressedMatrixTestSymmetric(), GeneralDropoutComponent::GetMemo(), main(), kaldi::MeanVariance(), DropoutMaskComponent::Propagate(), DropoutComponent::Propagate(), ClipGradientComponent::RepairGradients(), TanhComponent::StoreStats(), kaldi::TestCuMatrixCompObjfAndDeriv(), kaldi::nnet3::TestSimpleComponentPropagateProperties(), kaldi::UnitTestCuMatrixAdd(), kaldi::UnitTestCuMatrixAdd2(), kaldi::UnitTestCuMatrixEqualElementMask(), kaldi::UnitTestCuMatrixObjfDeriv(), kaldi::UnitTestCuMatrixSetRandUniform(), and kaldi::UnitTestCuMatrixTraceMatMat().

| void AddCols | ( | const CuMatrixBase< Real > & | src, |

| const CuArrayBase< MatrixIndexT > & | indices | ||

| ) |

Add column indices[r] of src to column r.

As a special case, if indices[i] == -1, column i is skipped. indices.size() must equal this->NumCols(), and src.NumRows() must equal this->NumRows().
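The indexing rule can be illustrated with a minimal CPU sketch. It is not Kaldi's implementation: `Mat` and `AddColsRef` are hypothetical names, and a nested `std::vector` stands in for the GPU matrix.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical stand-in for a dense row-major matrix.
using Mat = std::vector<std::vector<float>>;

// Reference semantics only: dst(:, c) += src(:, indices[c]); -1 skips column c.
void AddColsRef(Mat &dst, const Mat &src, const std::vector<int> &indices) {
  assert(dst.size() == src.size());  // same number of rows
  for (std::size_t r = 0; r < dst.size(); ++r) {
    assert(indices.size() == dst[r].size());  // one index per column of dst
    for (std::size_t c = 0; c < indices.size(); ++c) {
      if (indices[c] < 0) continue;  // -1: leave this column untouched
      dst[r][c] += src[r][indices[c]];
    }
  }
}
```

Note that src may have a different number of columns than *this; only the row counts have to agree.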

Definition at line 2701 of file cu-matrix.cc.

Referenced by Convolutional1dComponent::Backprop(), ConvolutionalComponent::BackpropagateFnc(), ConvolutionComponent::InderivPatchesToInderiv(), and MaxpoolingComponent::InderivPatchesToInderiv().

| void AddDiagVecMat | ( | const Real | alpha, |

| const CuVectorBase< Real > & | v, | ||

| const CuMatrixBase< Real > & | M, | ||

| MatrixTransposeType | transM, | ||

| Real | beta = 1.0 |

||

| ) |

*this = beta * *this + alpha * diag(v) * M [or M^T].

The same as adding M but scaling each row M_i by v(i).
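As a rough illustration of the row-scaling reading, here is a CPU sketch for the kNoTrans case only. `Mat` and `AddDiagVecMatRef` are hypothetical names, not part of Kaldi.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical stand-in for a dense row-major matrix.
using Mat = std::vector<std::vector<float>>;

// Reference semantics (no transpose): self = beta * self + alpha * diag(v) * M.
// Multiplying by diag(v) on the left scales row r of M by v[r].
void AddDiagVecMatRef(Mat &self, float alpha, const std::vector<float> &v,
                      const Mat &M, float beta) {
  assert(v.size() == self.size() && M.size() == self.size());
  for (std::size_t r = 0; r < self.size(); ++r) {
    assert(M[r].size() == self[r].size());
    for (std::size_t c = 0; c < self[r].size(); ++c) {
      self[r][c] = beta * self[r][c] + alpha * v[r] * M[r][c];
    }
  }
}
```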

Definition at line 1382 of file cu-matrix.cc.

Referenced by CuMatrixBase< float >::ApplyLog(), kaldi::nnet3::attention::ApplyScalesToInput(), kaldi::nnet3::attention::ApplyScalesToOutput(), HiddenSoftmax::BackpropagateFnc(), MultiBasisComponent::BackpropagateFnc(), OnlinePreconditioner::ComputeWt1(), OnlineNaturalGradient::ComputeWt1(), kaldi::cu::DiffNormalizePerRow(), CuMatrixBase< float >::DiffSoftmaxPerRow(), MultiBasisComponent::PropagateFnc(), and kaldi::TestCuMatrixAddDiagVecMat().

| void AddElements | ( | Real | alpha, |

| const std::vector< MatrixElement< Real > > & | input | ||

| ) |

Definition at line 3277 of file cu-matrix.cc.

Referenced by OnlinePreconditioner::InitOrthonormalSpecial(), OnlineNaturalGradient::InitOrthonormalSpecial(), CuMatrixBase< float >::operator()(), DiscriminativeComputation::ProcessPosteriors(), and kaldi::UnitTestCuMatrixAddElements().

| void AddElements | ( | Real | alpha, |

| const CuArrayBase< Int32Pair > & | indexes, | ||

| const Real * | input | ||

| ) |

Definition at line 3311 of file cu-matrix.cc.

| void AddMat | ( | Real | alpha, |

| const CuMatrixBase< Real > & | A, | ||

| MatrixTransposeType | trans = kNoTrans |

||

| ) |

*this += alpha * A

Definition at line 954 of file cu-matrix.cc.

Referenced by RestrictedAttentionComponent::Add(), CuRand< float >::AddGaussNoise(), GeneralMatrix::AddToMat(), CuMatrixBase< float >::ApplyLog(), CuMatrixBase< float >::ApproxEqual(), kaldi::nnet3::attention::AttentionBackward(), kaldi::nnet3::attention::AttentionForward(), SigmoidComponent::Backprop(), Splice::BackpropagateFnc(), AveragePoolingComponent::BackpropagateFnc(), MultiBasisComponent::BackpropagateFnc(), LstmNonlinearityComponent::ConsolidateMemory(), kaldi::nnet3::ConstrainOrthonormalInternal(), kaldi::CuCompressedMatrixTestNonnegative(), kaldi::CuCompressedMatrixTestSymmetric(), CuMatrixBase< float >::DiffLogSoftmaxPerRow(), Xent::Eval(), Mse::Eval(), NnetComputer::ExecuteCommand(), AdditiveNoiseComponent::Propagate(), ClipGradientComponent::RepairGradients(), RestrictedAttentionComponent::StoreStats(), kaldi::nnet3::attention::TestAttentionForwardBackward(), NoOpTransform::TrainingBackward(), kaldi::UnitTestCuMatrixAddMat(), kaldi::UnitTestCuMatrixAddMatBlocks1(), kaldi::UnitTestCuMatrixAddMatBlocks1Trans(), kaldi::UnitTestCuMatrixAddMatBlocks2(), kaldi::UnitTestCuMatrixAddMatDiagVec(), kaldi::UnitTestCuMatrixAddMatMatElements(), kaldi::UnitTestLstmNonlinearity(), and kaldi::nnet3::UnitTestNnetInputDerivatives().

| void AddMatBlock | ( | Real | alpha, |

| const CuMatrixBase< Real > & | A, | ||

| MatrixTransposeType | transA, | ||

| const CuBlockMatrix< Real > & | B, | ||

| MatrixTransposeType | transB, | ||

| Real | beta | ||

| ) |

This function is like AddMatMat, but for the case where the second matrix argument is of type CuBlockMatrix (a block-diagonal matrix of blocks).

Definition at line 3205 of file cu-matrix.cc.

Referenced by CuMatrixBase< float >::ApplyLog(), and kaldi::UnitTestCuBlockMatrixAddMatBlock().

| void AddMatBlocks | ( | Real | alpha, |

| const CuMatrixBase< Real > & | A, | ||

| MatrixTransposeType | trans = kNoTrans |

||

| ) |

This function is like AddMat (it does *this += alpha * src), except that it supports cases where *this and src have different dimension.

There are two allowed cases:

(1) *this is larger than src; we do a broadcasting operation. *this must have NumRows() == a * src.NumRows() and NumCols() == b * src.NumCols() for integer a >= 1, b >= 1. *this will be treated as being made up of blocks with the same size as src, and to each block we'll add alpha * src. This case does not support trans == kTrans.

(2) *this is smaller than src; we sum. src.NumRows() must == a * this->NumRows(), and src.NumCols() must == b * this->NumCols(), for a >= 1, b >= 1. In this case, src will be treated as being made up of blocks with the same size as *this, and to *this we will add the summation of all of those blocks.
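The two cases can be sketched on the CPU as follows, for trans == kNoTrans only. `Mat` and `AddMatBlocksRef` are hypothetical names used for illustration, and the block layout (block (i, j) starts at row i * block_rows, column j * block_cols) is an assumption drawn from the description above.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical stand-in for a dense row-major matrix.
using Mat = std::vector<std::vector<float>>;

// Reference semantics only (no transpose).
void AddMatBlocksRef(Mat &self, float alpha, const Mat &src) {
  const std::size_t rows = self.size(), cols = self[0].size();
  const std::size_t srows = src.size(), scols = src[0].size();
  if (rows >= srows && cols >= scols) {
    // Case (1): broadcast src into every src-sized block of self.
    assert(rows % srows == 0 && cols % scols == 0);
    for (std::size_t r = 0; r < rows; ++r)
      for (std::size_t c = 0; c < cols; ++c)
        self[r][c] += alpha * src[r % srows][c % scols];
  } else {
    // Case (2): sum all self-sized blocks of src into self.
    assert(srows % rows == 0 && scols % cols == 0);
    for (std::size_t r = 0; r < srows; ++r)
      for (std::size_t c = 0; c < scols; ++c)
        self[r % rows][c % cols] += alpha * src[r][c];
  }
}
```

When both matrices have the same size, the first branch reduces to a plain AddMat.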

Definition at line 1119 of file cu-matrix.cc.

Referenced by CuMatrixBase< float >::ApplyLog(), SumBlockComponent::Backprop(), SumBlockComponent::Propagate(), kaldi::UnitTestCuMatrixAddMatBlocks1(), kaldi::UnitTestCuMatrixAddMatBlocks1Trans(), kaldi::UnitTestCuMatrixAddMatBlocks2(), ConvolutionComponent::Update(), and Convolutional1dComponent::Update().

| void AddMatDiagVec | ( | const Real | alpha, |

| const CuMatrixBase< Real > & | M, | ||

| MatrixTransposeType | transM, | ||

| CuVectorBase< Real > & | v, | ||

| Real | beta = 1.0 |

||

| ) |

Definition at line 1415 of file cu-matrix.cc.

Referenced by CuMatrixBase< float >::ApplyLog(), BatchNormComponent::Backprop(), LstmNonlinearityComponent::ConsolidateMemory(), SigmoidComponent::RepairGradients(), and TanhComponent::RepairGradients().

| void AddMatMat | ( | Real | alpha, |

| const CuMatrixBase< Real > & | A, | ||

| MatrixTransposeType | transA, | ||

| const CuMatrixBase< Real > & | B, | ||

| MatrixTransposeType | transB, | ||

| Real | beta | ||

| ) |

C = alpha * A(^T)*B(^T) + beta * C.
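Here C is *this, and each "(^T)" is applied only when the corresponding transpose argument is kTrans. A naive CPU sketch of that formula follows. `Mat` and `AddMatMatRef` are hypothetical names, and the `bool` flags stand in for MatrixTransposeType.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical stand-in for a dense row-major matrix.
using Mat = std::vector<std::vector<float>>;

// Reference semantics only: C = alpha * op(A) * op(B) + beta * C,
// where op(X) is X^T if the matching flag is true, else X.
void AddMatMatRef(Mat &C, float alpha, const Mat &A, bool transA,
                  const Mat &B, bool transB, float beta) {
  auto rows = [](const Mat &M, bool t) { return t ? M[0].size() : M.size(); };
  auto cols = [](const Mat &M, bool t) { return t ? M.size() : M[0].size(); };
  auto at = [](const Mat &M, bool t, std::size_t r, std::size_t c) {
    return t ? M[c][r] : M[r][c];
  };
  assert(cols(A, transA) == rows(B, transB));  // inner dimensions agree
  assert(C.size() == rows(A, transA) && C[0].size() == cols(B, transB));
  const std::size_t inner = cols(A, transA);
  for (std::size_t r = 0; r < C.size(); ++r) {
    for (std::size_t c = 0; c < C[r].size(); ++c) {
      float sum = 0.0f;
      for (std::size_t k = 0; k < inner; ++k)
        sum += at(A, transA, r, k) * at(B, transB, k, c);
      C[r][c] = alpha * sum + beta * C[r][c];
    }
  }
}
```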

Definition at line 1291 of file cu-matrix.cc.

Referenced by CuMatrixBase< float >::AddMatBlock(), CuBlockMatrix< Real >::AddMatMat(), CuMatrixBase< float >::AddMatSp(), CuMatrixBase< float >::AddMatTp(), CuMatrixBase< float >::AddSpMat(), CuMatrixBase< float >::AddTpMat(), CuMatrixBase< float >::ApplyLog(), TdnnComponent::Backprop(), RepeatedAffineComponent::Backprop(), AffineComponent::Backprop(), LinearComponent::Backprop(), FixedLinearComponent::Backprop(), FixedAffineComponent::Backprop(), LinearTransform::BackpropagateFnc(), AffineTransform::BackpropagateFnc(), RecurrentComponent::BackpropagateFnc(), ConvolutionalComponent::BackpropagateFnc(), LstmProjected::BackpropagateFnc(), BlstmProjected::BackpropagateFnc(), ModelCollapser::CollapseComponentsAffine(), OnlinePreconditioner::ComputeWt1(), OnlineNaturalGradient::ComputeWt1(), LstmNonlinearityComponent::ConsolidateMemory(), kaldi::nnet3::ConstrainOrthonormalInternal(), kaldi::nnet3::time_height_convolution::ConvolveBackwardDataInternal(), kaldi::nnet3::time_height_convolution::ConvolveBackwardParamsInternal(), kaldi::nnet3::time_height_convolution::ConvolveForwardInternal(), kaldi::CuVectorUnitTestAddDiagMatMat(), OnlinePreconditioner::InitOrthonormalSpecial(), kaldi::nnet2::PreconditionDirections(), OnlinePreconditioner::PreconditionDirectionsInternal(), OnlineNaturalGradient::PreconditionDirectionsInternal(), TdnnComponent::Propagate(), AffineComponent::Propagate(), LinearComponent::Propagate(), DctComponent::Propagate(), FixedLinearComponent::Propagate(), FixedAffineComponent::Propagate(), KlHmm::PropagateFnc(), LinearTransform::PropagateFnc(), AffineTransform::PropagateFnc(), RecurrentComponent::PropagateFnc(), Rbm::PropagateFnc(), LstmProjected::PropagateFnc(), BlstmProjected::PropagateFnc(), Rbm::Reconstruct(), OnlineNaturalGradient::ReorthogonalizeRt1(), OnlinePreconditioner::ReorthogonalizeXt1(), kaldi::TestCuMatrixMatMat(), kaldi::UnitTestCuBlockMatrixAddMatMat(), kaldi::UnitTestCuCholesky(), kaldi::UnitTestCuMatrixAddMatMat(), 
kaldi::UnitTestCuMatrixSymAddMat2(), kaldi::UnitTestCuMatrixSymInvertPosDef(), kaldi::UnitTestCuSpMatrixInvert(), BlockAffineComponentPreconditioned::Update(), TdnnComponent::UpdateSimple(), and BlockAffineComponent::UpdateSimple().

| void AddMatMatElements | ( | const Real | alpha, |

| const CuMatrixBase< Real > & | A, | ||

| const CuMatrixBase< Real > & | B, | ||

| const Real | beta | ||

| ) |

*this = beta * *this + alpha * A .* B (.* element by element multiplication)

Definition at line 1447 of file cu-matrix.cc.

Referenced by CuMatrixBase< float >::ApplyLog(), StatisticsExtractionComponent::Backprop(), LstmNonlinearityComponent::ConsolidateMemory(), StatisticsPoolingComponent::Propagate(), and kaldi::UnitTestCuMatrixSetMatMatDivMat().

| void AddMatSmat | ( | Real | alpha, |

| const CuMatrixBase< Real > & | A, | ||

| const CuSparseMatrix< Real > & | B, | ||

| MatrixTransposeType | transB, | ||

| Real | beta | ||

| ) |

(*this) = alpha * A * op(B) + beta * (*this), where B is sparse and op(B) is either B or trans(B) depending on the 'transB' argument.

This is multiplication of a dense by a sparse matrix. See also AddSmatMat.

Definition at line 1080 of file cu-matrix.cc.

Referenced by CuMatrixBase< float >::ApplyLog(), and kaldi::UnitTextCuMatrixAddMatSmat().

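| void AddMatSp | ( | const Real | alpha, |

| const CuMatrixBase< Real > & | A, | ||

| MatrixTransposeType | transA, | ||

| const CuSpMatrix< Real > & | B, | ||

| const Real | beta | ||

| ) | inline |

this <- beta*this + alpha*A*B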

Definition at line 614 of file cu-matrix.h.

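| void AddMatTp | ( | const Real | alpha, |

| const CuMatrixBase< Real > & | A, | ||

| MatrixTransposeType | transA, | ||

| const CuTpMatrix< Real > & | B, | ||

| MatrixTransposeType | transB, | ||

| const Real | beta | ||

| ) | inline |

this <- beta*this + alpha*A*B.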

Definition at line 641 of file cu-matrix.h.

Referenced by kaldi::UnitTestCuMatrixAddMatTp().

| void AddRowRanges | ( | const CuMatrixBase< Real > & | src, |

| const CuArrayBase< Int32Pair > & | indexes | ||

| ) |

For each row r of this and for each column c, do (*this)(r, c) += src(j, c), where j ranges from indexes[r].first through indexes[r].second - 1.

In general indexes must be >= 0 and < src.NumRows(); but to represent an empty range you may use the pair (-1, -1) or any pair of numbers (i, j) such that i >= j.
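A small CPU sketch of the half-open range convention. `Mat`, `RangeRef` and `AddRowRangesRef` are hypothetical names; `RangeRef` stands in for Int32Pair.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical stand-ins for a dense row-major matrix and for Int32Pair.
using Mat = std::vector<std::vector<float>>;
struct RangeRef { int first; int second; };

// Reference semantics only: self(r, c) += sum of src(j, c) for
// j in [indexes[r].first, indexes[r].second). A pair with first >= second,
// such as (-1, -1), is an empty range and leaves row r unchanged.
void AddRowRangesRef(Mat &self, const Mat &src,
                     const std::vector<RangeRef> &indexes) {
  assert(indexes.size() == self.size());
  for (std::size_t r = 0; r < self.size(); ++r) {
    for (int j = indexes[r].first; j < indexes[r].second; ++j) {
      assert(j >= 0 && static_cast<std::size_t>(j) < src.size());
      for (std::size_t c = 0; c < self[r].size(); ++c)
        self[r][c] += src[j][c];
    }
  }
}
```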

Definition at line 2931 of file cu-matrix.cc.

Referenced by StatisticsPoolingComponent::Backprop(), NnetComputer::ExecuteCommand(), StatisticsPoolingComponent::Propagate(), and kaldi::UnitTestCuMatrixAddRowRanges().

| void AddRows | ( | Real | alpha, |

| const CuMatrixBase< Real > & | src, | ||

| const CuArrayBase< MatrixIndexT > & | indexes | ||

| ) |

Does for each row r, this.Row(r) += alpha * src.Row(indexes[r]).

If indexes[r] < 0, does not add anything. src.NumCols() must equal this.NumCols().
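A minimal CPU sketch of this gather-and-add behaviour. `Mat` and `AddRowsRef` are hypothetical names, not Kaldi code.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical stand-in for a dense row-major matrix.
using Mat = std::vector<std::vector<float>>;

// Reference semantics only: self.Row(r) += alpha * src.Row(indexes[r]),
// skipping rows whose index is negative.
void AddRowsRef(Mat &self, float alpha, const Mat &src,
                const std::vector<int> &indexes) {
  assert(indexes.size() == self.size());
  for (std::size_t r = 0; r < self.size(); ++r) {
    if (indexes[r] < 0) continue;  // nothing is added to this row
    const std::vector<float> &from = src[indexes[r]];
    assert(from.size() == self[r].size());  // column counts must match
    for (std::size_t c = 0; c < self[r].size(); ++c)
      self[r][c] += alpha * from[c];
  }
}
```

The same source row may be referenced by several destination rows, since each destination row is written exactly once.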

Definition at line 2766 of file cu-matrix.cc.

Referenced by StatisticsExtractionComponent::Backprop(), and NnetComputer::ExecuteCommand().

| void AddRows | ( | Real | alpha, |

| const CuArrayBase< const Real *> & | src | ||

| ) |

Does for each row r, this.Row(r) += alpha * src[r], treating src[r] as the beginning of a region of memory representing a vector of floats, of the same length as this.NumCols().

Definition at line 2826 of file cu-matrix.cc.

| void AddSmat | ( | Real | alpha, |

| const CuSparseMatrix< Real > & | A, | ||

| MatrixTransposeType | trans = kNoTrans |

||

| ) |

*this += alpha * A.

Definition at line 985 of file cu-matrix.cc.

Referenced by GeneralMatrix::AddToMat(), CuMatrixBase< float >::ApplyLog(), and kaldi::UnitTextCuMatrixAddSmat().

| void AddSmatMat | ( | Real | alpha, |

| const CuSparseMatrix< Real > & | A, | ||

| MatrixTransposeType | transA, | ||

| const CuMatrixBase< Real > & | B, | ||

| Real | beta | ||

| ) |

(*this) = alpha * op(A) * B + beta * (*this), where A is sparse.

Multiplies a sparse matrix by a dense matrix. See also AddMatSmat. Note: for greatest efficiency we recommend that transA be kNoTrans. Prefer AddMatSmat() where possible, since this function performs two dense-matrix transpose operations internally.

Definition at line 1024 of file cu-matrix.cc.

Referenced by CuMatrixBase< float >::ApplyLog(), and kaldi::UnitTextCuMatrixAddSmatMat().

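| void AddSpMat | ( | const Real | alpha, |

| const CuSpMatrix< Real > & | A, | ||

| const CuMatrixBase< Real > & | B, | ||

| MatrixTransposeType | transB, | ||

| const Real | beta | ||

| ) | inline |

this <- beta*this + alpha*SpA*B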

Definition at line 623 of file cu-matrix.h.

| void AddToDiag | ( | Real | value | ) |

Adds "value" to the diagonal elements of the matrix.

The matrix *this does not have to be square.

Definition at line 604 of file cu-matrix.cc.

Referenced by kaldi::nnet3::ConstrainOrthonormalInternal(), kaldi::nnet2::PreconditionDirections(), kaldi::TestCuMatrixCholesky(), and kaldi::UnitTestCuMatrixAddToDiag().

| void AddToElements | ( | Real | alpha, |

| const CuArrayBase< int32 > & | elements | ||

| ) |

This is a rather special purpose function; we might generalize it later by adding a transpose-type option.

It expects 'elements.Dim()' to equal NumRows(), and each elements[i] to be either -1 or to satisfy 0 <= elements[i] < NumCols(). It adds alpha to each element (*this)(i, elements[i]) for 0 <= i < NumRows(), skipping rows where elements[i] is -1.
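In other words, at most one entry per row is modified. A CPU sketch under that reading, with hypothetical names `Mat` and `AddToElementsRef`:

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical stand-in for a dense row-major matrix.
using Mat = std::vector<std::vector<float>>;

// Reference semantics only: self(i, elements[i]) += alpha for each row i,
// except rows whose entry is -1, which are left unchanged.
void AddToElementsRef(Mat &self, float alpha, const std::vector<int> &elements) {
  assert(elements.size() == self.size());  // one column index per row
  for (std::size_t i = 0; i < self.size(); ++i) {
    const int c = elements[i];
    if (c < 0) continue;
    assert(static_cast<std::size_t>(c) < self[i].size());
    self[i][c] += alpha;
  }
}
```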

Definition at line 3344 of file cu-matrix.cc.

Referenced by CuMatrixBase< float >::ApplyLog(), and kaldi::UnitTestCuMatrixAddToElements().

| void AddToRows | ( | Real | alpha, |

| const CuArrayBase< MatrixIndexT > & | indexes, | ||

| CuMatrixBase< Real > * | dst | ||

| ) | const |

For each row i of *this, adds alpha * this->Row(i) to dst->Row(indexes[i]) if indexes[i] >= 0; otherwise does nothing.

Requires that all the indexes[i] that are >= 0 be distinct, otherwise the behavior is undefined.
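A minimal CPU-only sketch of this scatter-add, under stated assumptions: `Mat` and `AddToRowsRef` are hypothetical names, and the scaling by alpha is inferred from the function signature rather than from the text above.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical CPU stand-in for a matrix; not a Kaldi type.
using Mat = std::vector<std::vector<float>>;

// dst->Row(indexes[i]) += alpha * src.Row(i) for every i with indexes[i] >= 0.
void AddToRowsRef(const Mat &src, float alpha, const std::vector<int> &indexes,
                  Mat *dst) {
  assert(indexes.size() == src.size());
  for (std::size_t i = 0; i < src.size(); ++i) {
    const int r = indexes[i];
    if (r < 0) continue;  // negative index: this source row is skipped
    assert(static_cast<std::size_t>(r) < dst->size());
    assert((*dst)[r].size() == src[i].size());
    for (std::size_t j = 0; j < src[i].size(); ++j)
      (*dst)[r][j] += alpha * src[i][j];
  }
}
```

The sequential loop would tolerate repeated indexes; the distinctness requirement matters for a parallel implementation, where two rows writing to the same destination would race.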

Definition at line 2869 of file cu-matrix.cc.

Referenced by NnetComputer::ExecuteCommand(), and kaldi::UnitTestCuMatrixAddToRows().

| void AddToRows | ( | Real | alpha, |

| const CuArrayBase< Real *> & | dst | ||

| ) | const |

For each row r of this matrix, adds it (times alpha) to the array of floats at the location given by dst[r], where dst[r] is assumed to be obtained from the RowData() function of another CuMatrix, or from CuVector::Data() (i.e. it should point to memory on the GPU if we're using a GPU, or on the CPU otherwise). If dst[r] is NULL, does not do anything for that row. Requires that none of the memory regions pointed to by the pointers in "dst" overlap (e.g. none of the pointers should be the same).

Definition at line 2847 of file cu-matrix.cc.

|

inline |

this <- beta*this + alpha*A*B.

Definition at line 632 of file cu-matrix.h.

Referenced by kaldi::UnitTestCuMatrixAddTpMat().

| void AddVecToCols | ( | Real | alpha, |

| const CuVectorBase< Real > & | col, | ||

| Real | beta = 1.0 |

||

| ) |

(for each column c of *this), c = alpha * col + beta * c

Definition at line 1232 of file cu-matrix.cc.

Referenced by CuMatrixBase< float >::ApplyLog(), KlHmm::PropagateFnc(), and kaldi::UnitTestCuMatrixAddVecToCols().

| void AddVecToRows | ( | Real | alpha, |

| const CuVectorBase< Real > & | row, | ||

| Real | beta = 1.0 |

||

| ) |

(for each row r of *this), r = alpha * row + beta * r
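As a rough CPU-only illustration of the row update above (not the library's code; `Mat` and `AddVecToRowsRef` are hypothetical names):

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical CPU stand-in for a matrix; not a Kaldi type.
using Mat = std::vector<std::vector<float>>;

// For each row r of m: r = alpha * row + beta * r.
void AddVecToRowsRef(Mat &m, float alpha, const std::vector<float> &row,
                     float beta = 1.0f) {
  for (std::size_t i = 0; i < m.size(); ++i) {
    assert(row.size() == m[i].size());  // vector length must match NumCols()
    for (std::size_t j = 0; j < row.size(); ++j)
      m[i][j] = alpha * row[j] + beta * m[i][j];
  }
}
```

With the default beta of 1.0 this adds a scaled bias vector to every row, which matches how the function is used by the many Propagate() callers listed below.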

Definition at line 1261 of file cu-matrix.cc.

Referenced by DecodableNnetLoopedOnlineBase::AdvanceChunk(), DecodableNnetSimpleLooped::AdvanceChunk(), CuMatrixBase< float >::ApplyLog(), BatchNormComponent::Backprop(), SimpleSentenceAveragingComponent::BackpropagateFnc(), ScaleAndOffsetComponent::BackpropInternal(), NnetBatchComputer::Compute(), DecodableNnet2Online::ComputeForFrame(), DecodableNnetSimple::DoNnetComputation(), SingleUtteranceNnet2DecoderThreaded::ProcessLoglikes(), ConvolutionComponent::Propagate(), BatchNormComponent::Propagate(), FixedAffineComponent::Propagate(), FixedBiasComponent::Propagate(), PerElementOffsetComponent::Propagate(), Convolutional1dComponent::Propagate(), SimpleSentenceAveragingComponent::PropagateFnc(), AffineTransform::PropagateFnc(), RecurrentComponent::PropagateFnc(), Rbm::PropagateFnc(), ConvolutionalComponent::PropagateFnc(), AddShift::PropagateFnc(), ScaleAndOffsetComponent::PropagateInternal(), Rbm::Reconstruct(), SigmoidComponent::RepairGradients(), RectifiedLinearComponent::RepairGradients(), PdfPrior::SubtractOnLogpost(), kaldi::UnitTestCuMatrixAddVecToRows(), and SentenceAveragingComponent::Update().

| void AddVecVec | ( | Real | alpha, |

| const CuVectorBase< Real > & | x, | ||

| const CuVectorBase< Real > & | y | ||

| ) |

A = alpha * x * y^T + A, where A is *this.
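The rank-one update can be sketched on the CPU as follows. This is only an illustration of the formula; `Mat` and `AddVecVecRef` are hypothetical names.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical CPU stand-in for a matrix; not a Kaldi type.
using Mat = std::vector<std::vector<float>>;

// a(i, j) += alpha * x(i) * y(j), i.e. a += alpha * x * y^T.
void AddVecVecRef(Mat &a, float alpha, const std::vector<float> &x,
                  const std::vector<float> &y) {
  assert(x.size() == a.size());  // x has one entry per row
  for (std::size_t i = 0; i < a.size(); ++i) {
    assert(y.size() == a[i].size());  // y has one entry per column
    for (std::size_t j = 0; j < y.size(); ++j)
      a[i][j] += alpha * x[i] * y[j];
  }
}
```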

Definition at line 1329 of file cu-matrix.cc.

Referenced by CuMatrixBase< float >::ApplyLog(), and kaldi::UnitTestCuMatrixAddVecVec().

|

inline |

Definition at line 455 of file cu-matrix.h.

Referenced by ClipGradientComponent::Backprop(), RecurrentComponent::BackpropagateFnc(), and kaldi::UnitTestCuMatrixApplyCeiling().

|

inline |

Definition at line 459 of file cu-matrix.h.

Referenced by DiscriminativeComputation::Compute(), CuMatrixBase< float >::DiffLogSoftmaxPerRow(), and kaldi::UnitTestCuMatrixApplyExp().

|

inline |

Definition at line 464 of file cu-matrix.h.

Referenced by kaldi::UnitTestCuMatrixApplyExpLimited().

|

inline |

Definition at line 468 of file cu-matrix.h.

Referenced by kaldi::UnitTestCuMatrixApplyExpSpecial().

|

inline |

Definition at line 451 of file cu-matrix.h.

Referenced by ClipGradientComponent::Backprop(), RecurrentComponent::BackpropagateFnc(), DecodableNnet2Online::ComputeForFrame(), main(), SingleUtteranceNnet2DecoderThreaded::ProcessLoglikes(), StatisticsPoolingComponent::Propagate(), RectifiedLinearComponent::Propagate(), SoftmaxComponent::Propagate(), LogSoftmaxComponent::Propagate(), ClipGradientComponent::RepairGradients(), RestrictedAttentionComponent::StoreStats(), kaldi::TestCuMatrixCompObjfAndDeriv(), kaldi::UnitTestCuMatrixApplyFloor(), kaldi::UnitTestCuMatrixObjfDeriv(), and kaldi::UnitTestCuMatrixSetMatMatDivMat().

|

inline |

Definition at line 447 of file cu-matrix.h.

Referenced by BackpropTruncationComponent::Backprop(), RectifiedLinearComponent::Backprop(), LstmNonlinearityComponent::ConsolidateMemory(), GeneralDropoutComponent::GetMemo(), DropoutMaskComponent::Propagate(), DropoutComponent::Propagate(), SigmoidComponent::RepairGradients(), TanhComponent::RepairGradients(), ClipGradientComponent::RepairGradients(), kaldi::TestCuMatrixHeaviside(), and kaldi::UnitTestCuMatrixApplyHeaviside().

|

inline |

Definition at line 480 of file cu-matrix.h.

Referenced by DecodableNnet2Online::ComputeForFrame(), main(), SingleUtteranceNnet2DecoderThreaded::ProcessLoglikes(), RestrictedAttentionComponent::StoreStats(), kaldi::TestCuMatrixCompObjfAndDeriv(), kaldi::UnitTestCuMatrixApplyLog(), and kaldi::UnitTestCuMatrixObjfDeriv().

|

inline |

Definition at line 476 of file cu-matrix.h.

|

inline |

Definition at line 438 of file cu-matrix.h.

Referenced by TanhComponent::Backprop(), LstmNonlinearityComponent::ConsolidateMemory(), kaldi::MeanVariance(), StatisticsExtractionComponent::Propagate(), StatisticsPoolingComponent::Propagate(), TanhComponent::StoreStats(), kaldi::UnitTestCuMatrixApplyPow(), kaldi::UnitTestCuMatrixSetRandn(), and kaldi::UnitTestCuMatrixSetRandUniform().

|

inline |

Definition at line 443 of file cu-matrix.h.

Referenced by PowerComponent::Backprop(), PowerComponent::Propagate(), ClipGradientComponent::RepairGradients(), and kaldi::UnitTestCuMatrixApplyPowAbs().

|

inline |

Definition at line 472 of file cu-matrix.h.

| bool ApproxEqual | ( | const CuMatrixBase< Real > & | other, |

| float | tol = 0.01 |

||

| ) | const |

True if ((*this)-other).FrobeniusNorm() <= tol * this->FrobeniusNorm()
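The tolerance test is relative to the norm of *this, not absolute. A CPU-only sketch of the same check, with hypothetical names `Mat`, `FrobeniusNormRef` and `ApproxEqualRef`:

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical CPU stand-in for a matrix; not a Kaldi type.
using Mat = std::vector<std::vector<float>>;

// Square root of the sum of squared elements.
double FrobeniusNormRef(const Mat &m) {
  double sum = 0.0;
  for (const auto &row : m)
    for (float x : row) sum += static_cast<double>(x) * x;
  return std::sqrt(sum);
}

// True if ||a - b||_F <= tol * ||a||_F.
bool ApproxEqualRef(const Mat &a, const Mat &b, float tol = 0.01f) {
  assert(a.size() == b.size());
  Mat diff = a;
  for (std::size_t r = 0; r < a.size(); ++r) {
    assert(a[r].size() == b[r].size());
    for (std::size_t c = 0; c < a[r].size(); ++c) diff[r][c] -= b[r][c];
  }
  return FrobeniusNormRef(diff) <= tol * FrobeniusNormRef(a);
}
```

Because the bound scales with the norm of the first argument, the check is not symmetric in general, and it only accepts an exact match when *this is all zeros.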

Definition at line 2137 of file cu-matrix.cc.

Referenced by CuMatrixBase< float >::FrobeniusNorm(), kaldi::UnitTestCuCholesky(), and kaldi::UnitTestCuCopy().

| void Ceiling | ( | const CuMatrixBase< Real > & | src, |

| Real | ceiling_val | ||

| ) |

Definition at line 2601 of file cu-matrix.cc.

Referenced by CuMatrixBase< float >::ApplyCeiling(), and CuMatrixBase< float >::SizeInBytes().

| void Cholesky | ( | CuMatrixBase< Real > * | inv_cholesky = NULL | ) |

This function sets *this to the Cholesky factor of *this (i.e. the C satisfying *this = C C^T), and sets "inv_cholesky" (if supplied) to its inverse. *this is treated as a symmetric matrix but only the lower triangle is accessed.
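For readers unfamiliar with the factorization, here is a textbook CPU sketch that reads only the lower triangle of its input. It is not the library's implementation: `Mat` and `CholeskyRef` are hypothetical names, the sketch returns a new matrix instead of overwriting its input, it zeroes the upper triangle of the result by its own choice, and it does not compute the inverse.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical CPU stand-in for a matrix; not a Kaldi type.
using Mat = std::vector<std::vector<float>>;

// Returns lower-triangular C with a = C * C^T, reading only a[i][j] for j <= i.
Mat CholeskyRef(const Mat &a) {
  const std::size_t n = a.size();
  Mat c(n, std::vector<float>(n, 0.0f));
  for (std::size_t i = 0; i < n; ++i) {
    assert(a[i].size() == n);  // input must be square
    for (std::size_t j = 0; j <= i; ++j) {
      double sum = a[i][j];
      for (std::size_t k = 0; k < j; ++k) sum -= c[i][k] * c[j][k];
      if (i == j) {
        assert(sum > 0.0);  // fails if the matrix is not positive definite
        c[i][j] = static_cast<float>(std::sqrt(sum));
      } else {
        c[i][j] = static_cast<float>(sum / c[j][j]);
      }
    }
  }
  return c;
}
```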

Definition at line 1987 of file cu-matrix.cc.

Referenced by CuTpMatrix< Real >::Cholesky(), CuMatrixBase< float >::Cholesky(), CuMatrixBase< float >::SizeInBytes(), kaldi::TestCuMatrixCholesky(), kaldi::UnitTestCholesky(), and kaldi::UnitTestCuCholesky().

|

inline |

Definition at line 665 of file cu-matrix.h.

Referenced by ConvolutionComponent::Backprop(), StatisticsExtractionComponent::Backprop(), StatisticsPoolingComponent::Backprop(), MaxpoolingComponent::Backprop(), BlockAffineComponent::Backprop(), Convolutional1dComponent::Backprop(), MaxPoolingComponent::BackpropagateFnc(), AveragePoolingComponent::BackpropagateFnc(), BlockSoftmax::BackpropagateFnc(), ParallelComponent::BackpropagateFnc(), SentenceAveragingComponent::BackpropagateFnc(), MultiBasisComponent::BackpropagateFnc(), ConvolutionalComponent::BackpropagateFnc(), BlstmProjected::BackpropagateFnc(), MultiTaskLoss::Eval(), ConvolutionComponent::Propagate(), StatisticsExtractionComponent::Propagate(), StatisticsPoolingComponent::Propagate(), MaxpoolingComponent::Propagate(), BlockAffineComponent::Propagate(), Convolutional1dComponent::Propagate(), MaxPoolingComponent::PropagateFnc(), AveragePoolingComponent::PropagateFnc(), BlockSoftmax::PropagateFnc(), FramePoolingComponent::PropagateFnc(), ParallelComponent::PropagateFnc(), SentenceAveragingComponent::PropagateFnc(), ConvolutionalComponent::PropagateFnc(), MultiBasisComponent::PropagateFnc(), BlstmProjected::PropagateFnc(), kaldi::UnitTestLstmNonlinearity(), ConvolutionComponent::Update(), FramePoolingComponent::Update(), SentenceAveragingComponent::Update(), ConvolutionalComponent::Update(), NaturalGradientRepeatedAffineComponent::Update(), and Convolutional1dComponent::Update().

| void CopyColFromVec | ( | const CuVectorBase< Real > & | v, |

| const MatrixIndexT | col | ||

| ) |

Copy vector into specific column of matrix.

Definition at line 2414 of file cu-matrix.cc.

Referenced by kaldi::cu::NormalizePerRow(), StatisticsExtractionComponent::Propagate(), DropoutMaskComponent::Propagate(), CuMatrixBase< float >::SizeInBytes(), NaturalGradientRepeatedAffineComponent::Update(), and TimeHeightConvolutionComponent::UpdateNaturalGradient().

| void CopyCols | ( | const CuMatrixBase< Real > & | src, |

| const CuArrayBase< MatrixIndexT > & | indexes | ||

| ) |

Copies column r from column indexes[r] of src.

As a special case, if indexes[i] == -1, sets column i to zero. indexes.size() must equal this->NumCols(), and src.NumRows() must equal this.NumRows().
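A CPU-only sketch of this column gather, including the -1 special case. `Mat` and `CopyColsRef` are hypothetical names used only for illustration.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical CPU stand-in for a matrix; not a Kaldi type.
using Mat = std::vector<std::vector<float>>;

// dst(r, c) = src(r, indexes[c]), or 0 when indexes[c] == -1.
void CopyColsRef(Mat &dst, const Mat &src, const std::vector<int> &indexes) {
  assert(src.size() == dst.size());  // row counts must match
  for (std::size_t r = 0; r < dst.size(); ++r) {
    assert(indexes.size() == dst[r].size());  // one index per output column
    for (std::size_t c = 0; c < indexes.size(); ++c) {
      const int s = indexes[c];
      if (s == -1) {
        dst[r][c] = 0.0f;
      } else {
        assert(s >= 0 && static_cast<std::size_t>(s) < src[r].size());
        dst[r][c] = src[r][s];
      }
    }
  }
}
```

The same source column may appear more than once in indexes, so this can duplicate or permute columns as well as select them.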

Definition at line 2656 of file cu-matrix.cc.

Referenced by SumGroupComponent::Backprop(), PermuteComponent::Backprop(), kaldi::nnet3::time_height_convolution::ConvolveBackwardParamsInternal(), kaldi::nnet3::time_height_convolution::ConvolveForwardInternal(), ConvolutionComponent::InputToInputPatches(), MaxpoolingComponent::InputToInputPatches(), PermuteComponent::Propagate(), Convolutional1dComponent::Propagate(), and Convolutional1dComponent::Update().

| void CopyColsFromVec | ( | const CuVectorBase< Real > & | v | ) |

Copies vector into matrix, column-by-column.

Note that v.Dim() must equal either NumRows()*NumCols() or NumRows(); this function has two modes of operation.

Definition at line 2376 of file cu-matrix.cc.

Referenced by DropoutComponent::Propagate(), CuMatrixBase< float >::SizeInBytes(), and kaldi::UnitTestCuMatrixCopyColsFromVec().

| void CopyFromBlock | ( | const CuBlockMatrix< Real > & | B, |

| MatrixTransposeType | trans = kNoTrans |

||

| ) |

Definition at line 161 of file cu-matrix.cc.

Referenced by CuMatrixBase< float >::AddMatTp().

| void CopyFromGeneralMat | ( | const GeneralMatrix & | src, |

| MatrixTransposeType | trans = kNoTrans |

||

| ) |

Definition at line 3096 of file cu-matrix.cc.

Referenced by NnetComputer::AcceptInputs(), kaldi::nnet3::ComputeObjectiveFunction(), and CuMatrixBase< float >::SizeInBytes().

| void CopyFromMat | ( | const MatrixBase< OtherReal > & | src, |

| MatrixTransposeType | trans = kNoTrans |

||

| ) |

Definition at line 344 of file cu-matrix.cc.

Referenced by kaldi::nnet3::attention::AttentionForward(), ElementwiseProductComponent::Backprop(), BatchNormComponent::Backprop(), BackpropTruncationComponent::Backprop(), TanhComponent::Backprop(), PowerComponent::Backprop(), RectifiedLinearComponent::Backprop(), ScaleComponent::Backprop(), GeneralDropoutComponent::Backprop(), SpecAugmentTimeMaskComponent::Backprop(), FixedScaleComponent::Backprop(), FixedBiasComponent::Backprop(), NoOpComponent::Backprop(), ClipGradientComponent::Backprop(), PerElementScaleComponent::Backprop(), PerElementOffsetComponent::Backprop(), Softmax::BackpropagateFnc(), HiddenSoftmax::BackpropagateFnc(), BlockSoftmax::BackpropagateFnc(), ParallelComponent::BackpropagateFnc(), SentenceAveragingComponent::BackpropagateFnc(), LengthNormComponent::BackpropagateFnc(), MultiBasisComponent::BackpropagateFnc(), Dropout::BackpropagateFnc(), AddShift::BackpropagateFnc(), Rescale::BackpropagateFnc(), ScaleAndOffsetComponent::BackpropInternal(), BlockAffineComponent::BlockAffineComponent(), NnetOnlineComputer::Compute(), LstmNonlinearityComponent::ConsolidateMemory(), kaldi::nnet3::ConstrainOrthonormal(), kaldi::nnet3::time_height_convolution::ConvolveForwardSimple(), CuMatrixBase< float >::CopyFromBlock(), CuBlockMatrix< Real >::CopyFromMat(), GeneralMatrix::CopyToMat(), CuMatrixBase< float >::DiffLogSoftmaxPerRow(), CuMatrixBase< float >::DiffSoftmaxPerRow(), NnetComputer::ExecuteCommand(), NnetRescaler::FormatInput(), NnetBatchComputer::FormatInputs(), kaldi::nnet3::attention::GetAttentionDotProducts(), GeneralDropoutComponent::GetMemo(), main(), kaldi::nnet2::NnetComputation(), kaldi::nnet2::NnetComputationChunked(), kaldi::cu::NormalizePerRow(), CuMatrix< float >::operator=(), kaldi::nnet2::PreconditionDirections(), OnlinePreconditionerSimple::PreconditionDirections(), OnlineNaturalGradientSimple::PreconditionDirections(), kaldi::nnet2::PreconditionDirectionsAlphaRescaled(), DropoutComponent::Propagate(), 
ElementwiseProductComponent::Propagate(), BatchNormComponent::Propagate(), BackpropTruncationComponent::Propagate(), PowerComponent::Propagate(), RectifiedLinearComponent::Propagate(), ScaleComponent::Propagate(), GeneralDropoutComponent::Propagate(), SpecAugmentTimeMaskComponent::Propagate(), SpliceMaxComponent::Propagate(), NoOpComponent::Propagate(), ClipGradientComponent::Propagate(), FixedScaleComponent::Propagate(), PerElementScaleComponent::Propagate(), FixedBiasComponent::Propagate(), PerElementOffsetComponent::Propagate(), AdditiveNoiseComponent::Propagate(), KlHmm::PropagateFnc(), ParallelComponent::PropagateFnc(), LengthNormComponent::PropagateFnc(), Dropout::PropagateFnc(), LstmProjected::PropagateFnc(), AddShift::PropagateFnc(), Rescale::PropagateFnc(), BlstmProjected::PropagateFnc(), ScaleAndOffsetComponent::PropagateInternal(), kaldi::nnet1::RandGauss(), CuRand< float >::RandGaussian(), CuRand< float >::RandUniform(), kaldi::nnet1::RandUniform(), OnlineNaturalGradient::ReorthogonalizeRt1(), OnlinePreconditioner::ReorthogonalizeXt1(), CuMatrixBase< float >::SizeInBytes(), NnetBatchComputer::SplitUtteranceIntoTasks(), kaldi::TestCuFindRowMaxId(), kaldi::TestCuMatrixTransposeCross(), kaldi::nnet3::TestSimpleComponentPropagateProperties(), kaldi::TestSymInvertPosDef(), NoOpTransform::TrainingForward(), AppendTransform::TrainingForward(), SimpleMeanTransform::TrainingForward(), kaldi::UnitInvert(), kaldi::UnitTestCheck(), kaldi::UnitTestCholesky(), kaldi::UnitTestConstructor(), kaldi::UnitTestCopyFromMat(), kaldi::UnitTestCopySp(), kaldi::UnitTestCuCopy(), kaldi::UnitTestCuDiffLogSoftmax(), kaldi::UnitTestCuDiffNormalizePerRow(), kaldi::UnitTestCuDiffSigmoid(), kaldi::UnitTestCuDiffSoftmax(), kaldi::UnitTestCuDiffTanh(), kaldi::UnitTestCuDiffXent(), kaldi::UnitTestCuFindRowMaxId(), kaldi::UnitTestCuLogSoftmax(), kaldi::UnitTestCuMathNormalizePerRow(), kaldi::UnitTestCuMathNormalizePerRow_v2(), kaldi::UnitTestCuMatrixAddMat(), 
kaldi::UnitTestCuMatrixAddMatDiagVec(), kaldi::UnitTestCuMatrixAddMatMat(), kaldi::UnitTestCuMatrixAddMatMatBatched(), kaldi::UnitTestCuMatrixAddMatMatElements(), kaldi::UnitTestCuMatrixAddVecToCols(), kaldi::UnitTestCuMatrixAddVecToRows(), kaldi::UnitTestCuMatrixCopyCross(), kaldi::UnitTestCuMatrixCopyCross2(), kaldi::UnitTestCuMatrixCopyFromMat(), kaldi::UnitTestCuMatrixDiffGroupPnorm(), kaldi::UnitTestCuMatrixDivElements(), kaldi::UnitTestCuMatrixDivRowsVec(), kaldi::UnitTestCuMatrixGroupMaxDeriv(), kaldi::UnitTestCuMatrixInvertElements(), kaldi::UnitTestCuMatrixMax(), kaldi::UnitTestCuMatrixMin(), kaldi::UnitTestCuMatrixMulColsVec(), kaldi::UnitTestCuMatrixMulElements(), kaldi::UnitTestCuMatrixMulRowsGroupMat(), kaldi::UnitTestCuMatrixMulRowsVec(), kaldi::UnitTestCuSigmoid(), kaldi::UnitTestCuSoftmax(), kaldi::UnitTestCuTanh(), kaldi::UnitTestCuVectorAddColSumMat(), kaldi::UnitTestCuVectorAddColSumMatLarge(), kaldi::UnitTestCuVectorAddRowSumMat(), kaldi::UnitTestCuVectorAddRowSumMatLarge(), kaldi::UnitTestInvert(), kaldi::UnitTestSwapCu2Cu(), kaldi::UnitTestSwapCu2M(), and BlockAffineComponentPreconditioned::Update().

| void CopyFromMat | ( | const MatrixBase< Real > & | src, |

| MatrixTransposeType | trans = kNoTrans |

||

| ) |

Definition at line 314 of file cu-matrix.cc.

| void CopyFromMat | ( | const CuMatrixBase< OtherReal > & | M, |

| MatrixTransposeType | trans = kNoTrans |

||

| ) |

Definition at line 208 of file cu-matrix.cc.

| void CopyFromSp | ( | const CuSpMatrix< Real > & | M | ) |

Definition at line 360 of file cu-matrix.cc.

Referenced by CuMatrix< float >::CuMatrix(), CuSpMatrix< Real >::Invert(), CuMatrixBase< float >::SizeInBytes(), and kaldi::TestCuMatrixCopyFromSp().

| template void CopyFromTp | ( | const CuTpMatrix< OtherReal > & | M, |

| MatrixTransposeType | trans = kNoTrans |

||

| ) |

Definition at line 280 of file cu-matrix.cc.

Referenced by CuMatrixBase< float >::Cholesky(), CuMatrix< float >::CuMatrix(), CuTpMatrix< Real >::Invert(), CuMatrixBase< float >::SizeInBytes(), kaldi::TestCuMatrixCopyFromTp(), and kaldi::UnitTestCuMatrixCopyFromTp().

| void CopyLowerToUpper | ( | ) |

Definition at line 2969 of file cu-matrix.cc.

Referenced by CuMatrixBase< float >::AddMatTp(), kaldi::nnet3::ConstrainOrthonormalInternal(), kaldi::nnet2::PreconditionDirections(), kaldi::TestCuMatrixCopyLowerToUpper(), kaldi::UnitTestCuCholesky(), and kaldi::UnitTestCuMatrixCopyLowerToUpper().

| void CopyRangeFromMatClamped | ( | const CuMatrixBase< Real > & | src, |

| int32_t | start_range, | ||

| int32_t | end_range, | ||

| int32_t | clamp_low, | ||

| int32_t | clamp_high | ||

| ) |

Definition at line 419 of file cu-matrix.cc.

Referenced by CuMatrixBase< float >::SizeInBytes().

| void CopyRows | ( | const CuMatrixBase< Real > & | src, |

| const CuArrayBase< MatrixIndexT > & | indexes | ||

| ) |

Copies row r from row indexes[r] of src.

As a special case, if indexes[i] < 0, sets row i to zero. src.NumCols() must equal this.NumCols().

Definition at line 2678 of file cu-matrix.cc.

Referenced by StatisticsExtractionComponent::Backprop(), SpliceComponent::Backprop(), NnetComputer::ExecuteCommand(), main(), DistributeComponent::Propagate(), and SpliceMaxComponent::Propagate().

| void CopyRows | ( | const CuArrayBase< const Real *> & | src | ) |

Copies row r of this matrix from an array of floats at the location given by src[r], where src[r] is assumed to be obtained from the RowData() function of another CuMatrix, or from CuVector::Data() (the point is: the data it points to should be on the GPU if we're using a GPU, and on a CPU otherwise).

src.size() must equal this.NumRows(), and if any src[r] is NULL then this.Row(r) will be set to zero.
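The pointer-based variant can be pictured with the CPU-only sketch below. `Mat` and `CopyRowsFromPointersRef` are hypothetical names, and the sketch ignores the GPU/CPU memory placement issue entirely by assuming every pointer refers to host memory holding at least NumCols() floats.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical CPU stand-in for a matrix; not a Kaldi type.
using Mat = std::vector<std::vector<float>>;

// Row r of dst is copied from src[r]; a null pointer zeroes the row.
void CopyRowsFromPointersRef(Mat &dst, const std::vector<const float *> &src) {
  assert(src.size() == dst.size());  // one pointer per row
  for (std::size_t r = 0; r < dst.size(); ++r) {
    for (std::size_t c = 0; c < dst[r].size(); ++c)
      dst[r][c] = (src[r] == nullptr) ? 0.0f : src[r][c];
  }
}
```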

Definition at line 2723 of file cu-matrix.cc.

| void CopyRowsFromVec | ( | const CuVectorBase< Real > & | v | ) |

This function has two modes of operation.

If v.Dim() == NumRows() * NumCols(), treats the vector as a row-by-row concatenation of a matrix and copies it to *this. If v.Dim() == NumCols(), sets each row of *this to a copy of v.
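The two modes can be sketched on the CPU as follows. `Mat` and `CopyRowsFromVecRef` are hypothetical names; the sketch assumes a rectangular matrix and checks the full-size mode first.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical CPU stand-in for a matrix; not a Kaldi type.
using Mat = std::vector<std::vector<float>>;

void CopyRowsFromVecRef(Mat &m, const std::vector<float> &v) {
  const std::size_t rows = m.size();
  const std::size_t cols = rows ? m[0].size() : 0;
  if (v.size() == rows * cols) {
    // Mode 1: v is the whole matrix laid out row by row.
    for (std::size_t r = 0; r < rows; ++r)
      for (std::size_t c = 0; c < cols; ++c) m[r][c] = v[r * cols + c];
  } else {
    // Mode 2: v is a single row, replicated into every row.
    assert(v.size() == cols);
    for (std::size_t r = 0; r < rows; ++r) m[r] = v;
  }
}
```

For a matrix with a single row the two modes coincide, so the order of the checks does not matter there.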

Definition at line 2301 of file cu-matrix.cc.

Referenced by kaldi::CuVectorUnitTestCopyFromMat(), NnetOnlineComputer::Flush(), NnetRescaler::FormatInput(), TimeHeightConvolutionComponent::Propagate(), TdnnComponent::Propagate(), RepeatedAffineComponent::Propagate(), ConstantComponent::Propagate(), AffineComponent::Propagate(), FixedAffineComponent::Propagate(), BlockAffineComponent::Propagate(), ConstantFunctionComponent::Propagate(), CuMatrixBase< float >::SizeInBytes(), and kaldi::UnitTestCuMatrixCopyRowsFromVec().

| void CopyRowsFromVec | ( | const VectorBase< Real > & | v | ) |

Version of CopyRowsFromVec() that takes a CPU-based vector.

Definition at line 2336 of file cu-matrix.cc.

| template void CopyToMat | ( | MatrixBase< OtherReal > * | dst, |

| MatrixTransposeType | trans = kNoTrans |

||

| ) | const |

Definition at line 447 of file cu-matrix.cc.

Referenced by NnetComputerFromEg::Compute(), CuMatrixBase< float >::CopyToMat(), kaldi::nnet1::MomentStatistics(), kaldi::operator<<(), KlHmm::PropagateFnc(), CuMatrixBase< float >::SizeInBytes(), kaldi::UnitInvert(), kaldi::UnitTestCholesky(), kaldi::UnitTestCuDiffLogSoftmax(), kaldi::UnitTestCuDiffSigmoid(), kaldi::UnitTestCuDiffSoftmax(), kaldi::UnitTestCuDiffTanh(), kaldi::UnitTestCuDiffXent(), kaldi::UnitTestCuMatrixAddMat(), kaldi::UnitTestCuMatrixAddMatMat(), kaldi::UnitTestCuMatrixAddVecToCols(), kaldi::UnitTestCuMatrixAddVecToRows(), kaldi::UnitTestCuMatrixAddVecVec(), kaldi::UnitTestCuMatrixDiffGroupPnorm(), kaldi::UnitTestCuMatrixDivElements(), kaldi::UnitTestCuMatrixDivRowsVec(), kaldi::UnitTestCuMatrixGroupMaxDeriv(), kaldi::UnitTestCuMatrixInvertElements(), kaldi::UnitTestCuMatrixMax(), kaldi::UnitTestCuMatrixMin(), kaldi::UnitTestCuMatrixMulColsVec(), kaldi::UnitTestCuMatrixMulElements(), kaldi::UnitTestCuMatrixMulRowsGroupMat(), kaldi::UnitTestCuMatrixMulRowsVec(), kaldi::UnitTestCuSigmoid(), kaldi::UnitTestCuTanh(), kaldi::UnitTestInvert(), kaldi::UnitTestMatrix(), UnitTestMatrixRandomizer(), kaldi::UnitTestSetZeroAboveDiag(), kaldi::UnitTestSwapCu2Cu(), and kaldi::UnitTestSwapCu2M().

| void CopyToRows | ( | const CuArrayBase< Real *> & | dst | ) | const |

For each row r of this matrix, copies it to the array of floats at the location given by dst[r], where dst[r] is assumed to be obtained from the RowData() function of another CuMatrix, or from CuVector::Data() (i.e. it should point to memory on the GPU if we're using a GPU, or on the CPU otherwise). If dst[r] is NULL, does not copy anywhere. Requires that none of the memory regions pointed to by the pointers in "dst" overlap (e.g. none of the pointers should be the same).

Definition at line 2744 of file cu-matrix.cc.

Referenced by DistributeComponent::Backprop(), NnetComputer::ExecuteCommand(), and kaldi::UnitTestCuMatrixCopyToRows().

| void CopyUpperToLower | ( | ) |

Definition at line 2990 of file cu-matrix.cc.

Referenced by CuMatrixBase< float >::AddMatTp(), kaldi::TestCuMatrixCopyUpperToLower(), and kaldi::UnitTestCuMatrixCopyUpperToLower().

|

inline |

Return data pointer (const).

Warning: may return a pointer to GPU memory. Use at your own risk.

Definition at line 746 of file cu-matrix.h.

Referenced by CuMatrixBase< float >::AddCols(), CuVectorBase< float >::AddColSumMat(), CuVectorBase< float >::AddDiagMatMat(), CuMatrixBase< float >::AddDiagVecMat(), CuSpMatrix< Real >::AddMat2(), CuMatrixBase< float >::AddMatBlock(), CuMatrixBase< float >::AddMatDiagVec(), CuBlockMatrix< Real >::AddMatMat(), CuMatrixBase< float >::AddMatMatElements(), CuMatrixBase< float >::AddMatSmat(), CuVectorBase< float >::AddMatVec(), CuMatrixBase< float >::AddRowRanges(), CuMatrixBase< float >::AddRows(), CuVectorBase< float >::AddRowSumMat(), CuMatrixBase< float >::AddSmatMat(), CuMatrixBase< float >::AddToRows(), NormalizeComponent::Backprop(), BatchNormComponent::Backprop(), RepeatedAffineComponent::Backprop(), GeneralDropoutComponent::Backprop(), PerElementScaleComponent::Backprop(), PerElementOffsetComponent::Backprop(), ScaleAndOffsetComponent::Backprop(), ScaleAndOffsetComponent::BackpropInternal(), kaldi::cu::BackpropLstmNonlinearity(), CuMatrix< float >::CompObjfAndDeriv(), DistributeComponent::ComputeInputPointers(), kaldi::cu::ComputeLstmNonlinearity(), kaldi::nnet3::time_height_convolution::ConvolveBackwardData(), kaldi::nnet3::time_height_convolution::ConvolveBackwardDataInternal(), kaldi::nnet3::time_height_convolution::ConvolveBackwardParams(), kaldi::nnet3::time_height_convolution::ConvolveBackwardParamsInternal(), kaldi::nnet3::time_height_convolution::ConvolveForward(), kaldi::nnet3::time_height_convolution::ConvolveForwardInternal(), kaldi::cu::Copy(), CuVectorBase< float >::CopyColFromMat(), CuMatrixBase< float >::CopyCols(), CuVectorBase< float >::CopyDiagFromMat(), CuVectorBase< float >::CopyElements(), CuTpMatrix< Real >::CopyFromMat(), CuCompressedMatrix< I >::CopyFromMat(), CuSpMatrix< Real >::CopyFromMat(), CuMatrixBase< float >::CopyFromMat(), CuMatrixBase< float >::CopyRangeFromMatClamped(), CuMatrixBase< float >::CopyRows(), CuVectorBase< float >::CopyRowsFromMat(), VectorBase< float >::CopyRowsFromMat(), CuSparseMatrix< Real >::CopyToMat(), 
CuCompressedMatrix< I >::CopyToMat(), CuMatrixBase< float >::DiffGroupPnorm(), CuMatrixBase< float >::DiffLogSoftmaxPerRow(), kaldi::cu::DiffNormalizePerRow(), CuMatrixBase< float >::DiffSoftmaxPerRow(), kaldi::cu::EnsureNonzero(), CuMatrixBase< float >::EqualElementMask(), NnetBatchComputer::FormatInputs(), NnetBatchComputer::FormatOutputs(), TdnnComponent::GetInputPart(), NnetComputer::GetPointers(), CuMatrixBase< float >::GroupMaxDeriv(), CuTpMatrix< Real >::Invert(), kaldi::nnet3::MergeTaskOutput(), CuMatrixBase< float >::MulRows(), kaldi::cu::NormalizePerRow(), NormalizeComponent::Propagate(), BatchNormComponent::Propagate(), TimeHeightConvolutionComponent::Propagate(), RepeatedAffineComponent::Propagate(), GeneralDropoutComponent::Propagate(), PerElementOffsetComponent::Propagate(), ScaleAndOffsetComponent::Propagate(), ScaleAndOffsetComponent::PropagateInternal(), CuRand< float >::RandGaussian(), kaldi::cu::Randomize(), CuRand< float >::RandUniform(), kaldi::cu::RegularizeL1(), RectifiedLinearComponent::RepairGradients(), CuBlockMatrix< Real >::SetCudaData(), kaldi::cu::Splice(), BatchNormComponent::StoreStats(), CuMatrixBase< float >::SumColumnRanges(), CuMatrixBase< float >::SymAddMat2(), kaldi::TraceMatMat(), kaldi::TraceMatSmat(), RepeatedAffineComponent::Update(), NaturalGradientRepeatedAffineComponent::Update(), TimeHeightConvolutionComponent::UpdateNaturalGradient(), and TimeHeightConvolutionComponent::UpdateSimple().

|

inline |

Return data pointer.

Warning: may return a pointer to GPU memory. Use at your own risk.

Definition at line 749 of file cu-matrix.h.

| void DiffGroupPnorm | ( | const CuMatrixBase< Real > & | in_value, |

| const CuMatrixBase< Real > & | out_value, | ||

| const CuMatrixBase< Real > & | out_deriv, | ||

| Real | power | ||

| ) |

Differentiate backward through the GroupPnorm function.

It is a combination of GroupPnormDeriv and MulRowsGroupMat.

Definition at line 841 of file cu-matrix.cc.

Referenced by PnormComponent::Backprop(), CuMatrixBase< float >::SizeInBytes(), and kaldi::UnitTestCuMatrixDiffGroupPnorm().

| void DiffLogSoftmaxPerRow | ( | const CuMatrixBase< Real > & | out_value, |

| const CuMatrixBase< Real > & | out_deriv | ||

| ) |

Differentiate backward through the log softmax function.

Here, "out_value" is the log softmax output. Does, for each row i, *this(i) = out_deriv(i) - sum(out_deriv(i)) .* exp(out_value(i)), where xxxx(i) denotes row i of xxxx as a row vector. Supports in-place operation, this == &out_deriv.
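A CPU-only sketch of the per-row formula, written so that the output may alias out_deriv. `Mat` and `DiffLogSoftmaxPerRowRef` are hypothetical names, not part of the library.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical CPU stand-in for a matrix; not a Kaldi type.
using Mat = std::vector<std::vector<float>>;

// out(i) = out_deriv(i) - sum(out_deriv(i)) * exp(out_value(i)), row by row.
void DiffLogSoftmaxPerRowRef(Mat &out, const Mat &out_value,
                             const Mat &out_deriv) {
  assert(out.size() == out_value.size() && out.size() == out_deriv.size());
  for (std::size_t r = 0; r < out.size(); ++r) {
    assert(out[r].size() == out_value[r].size());
    assert(out[r].size() == out_deriv[r].size());
    // The row sum is taken before any write, so out may alias out_deriv.
    float sum = 0.0f;
    for (float d : out_deriv[r]) sum += d;
    for (std::size_t c = 0; c < out[r].size(); ++c)
      out[r][c] = out_deriv[r][c] - sum * std::exp(out_value[r][c]);
  }
}
```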

Definition at line 1903 of file cu-matrix.cc.

Referenced by LogSoftmaxComponent::Backprop(), CuMatrixBase< float >::DiffLogSoftmaxPerRow(), CuMatrixBase< float >::SizeInBytes(), and kaldi::UnitTestCuDiffLogSoftmax().

| void DiffParametricRelu | ( | const CuMatrixBase< Real > & | value, |

| const CuMatrixBase< Real > & | diff, | ||

| const CuVectorBase< Real > & | alpha, | ||

| const CuVectorBase< Real > & | beta | ||

| ) |

Differentiate backward through the parametric relu function.

Here, "value" is the ReLU input. Does, element-by-element, *this = diff * (value > 0 ? alpha : beta).

Definition at line 1501 of file cu-matrix.cc.

Referenced by ParametricRelu::BackpropagateFnc(), and CuMatrixBase< float >::SizeInBytes().

| void DiffSigmoid | ( | const CuMatrixBase< Real > & | value, |

| const CuMatrixBase< Real > & | diff | ||

| ) |

Differentiate backward through the sigmoid function.

Here, "value" is the sigmoid output. Does, element-by-element, *this = diff * value * (1 - value).
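The element-wise rule follows from the derivative of the sigmoid, s'(x) = s(x) * (1 - s(x)), expressed in terms of the output. A CPU-only sketch with hypothetical names `Mat` and `DiffSigmoidRef`:

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical CPU stand-in for a matrix; not a Kaldi type.
using Mat = std::vector<std::vector<float>>;

// out = diff * value * (1 - value), element by element.
void DiffSigmoidRef(Mat &out, const Mat &value, const Mat &diff) {
  assert(out.size() == value.size() && out.size() == diff.size());
  for (std::size_t r = 0; r < out.size(); ++r) {
    assert(out[r].size() == value[r].size());
    assert(out[r].size() == diff[r].size());
    for (std::size_t c = 0; c < out[r].size(); ++c)
      out[r][c] = diff[r][c] * value[r][c] * (1.0f - value[r][c]);
  }
}
```

The gradient vanishes where the output saturates at 0 or 1, which is the case the second column of a test such as value = {0.5, 1.0} exercises.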

Definition at line 1764 of file cu-matrix.cc.

Referenced by SigmoidComponent::Backprop(), Sigmoid::BackpropagateFnc(), CuMatrixBase< float >::SizeInBytes(), and kaldi::UnitTestCuDiffSigmoid().

| void DiffSoftmaxPerRow | ( | const CuMatrixBase< Real > & | value, |

| const CuMatrixBase< Real > & | diff | ||

| ) |

Differentiate backward through the softmax function.

Here, "value" is the softmax output. Does, for each row i, *this(i) = diff(i) * diag(value(i)) - diff(i) * (value(i)^T * value(i)), where xxxx(i) denotes row i of xxxx as a row vector, and '*' and '-' are matrix operations. Supports in-place operation, this == &diff.
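Since diff(i) * diag(value(i)) is an element-wise product and diff(i) * value(i)^T is a scalar dot product, the row formula reduces to value .* (diff - dot(diff, value)). The CPU-only sketch below uses that simplification; `Mat` and `DiffSoftmaxPerRowRef` are hypothetical names.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical CPU stand-in for a matrix; not a Kaldi type.
using Mat = std::vector<std::vector<float>>;

// out(i) = value(i) .* (diff(i) - dot(diff(i), value(i))), row by row.
void DiffSoftmaxPerRowRef(Mat &out, const Mat &value, const Mat &diff) {
  assert(out.size() == value.size() && out.size() == diff.size());
  for (std::size_t r = 0; r < out.size(); ++r) {
    assert(out[r].size() == value[r].size());
    assert(out[r].size() == diff[r].size());
    // The dot product is taken before any write, so out may alias diff.
    float dot = 0.0f;
    for (std::size_t c = 0; c < diff[r].size(); ++c)
      dot += diff[r][c] * value[r][c];
    for (std::size_t c = 0; c < out[r].size(); ++c)
      out[r][c] = value[r][c] * (diff[r][c] - dot);
  }
}
```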

Definition at line 1868 of file cu-matrix.cc.

Referenced by kaldi::nnet3::attention::AttentionBackward(), SoftmaxComponent::Backprop(), CuMatrixBase< float >::SizeInBytes(), and kaldi::UnitTestCuDiffSoftmax().

| void DiffTanh | ( | const CuMatrixBase< Real > & | value, |

| const CuMatrixBase< Real > & | diff | ||

| ) |

Differentiate backward through the tanh function.

Here, "value" is the tanh output. Does, element-by-element, *this = diff * (1 - value^2).

Definition at line 1809 of file cu-matrix.cc.

Referenced by TanhComponent::Backprop(), RecurrentComponent::BackpropagateFnc(), Tanh::BackpropagateFnc(), LstmNonlinearityComponent::ConsolidateMemory(), CuMatrixBase< float >::SizeInBytes(), and kaldi::UnitTestCuDiffTanh().

| void DiffXent | ( | const CuArrayBase< int32 > & | tgt, |

| CuVector< Real > * | log_post_tgt | ||

| ) |

Differentiate the block [softmax+cross-entropy] : dE/da = posterior_mat - target_mat, 'E' is error function, 'a' is activation on softmax input.

Interface:

tgt ... index vector, encodes the matrix of targets

net_out_or_diff ... before invocation net output, after diff dE/da

log_post_tgt ... per-frame statistics for cross-entropy computations: log(sum_row(posterior_mat .* target_mat))
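With one-hot targets, posterior_mat - target_mat amounts to subtracting 1 from the target column of each row, and log(sum_row(posterior_mat .* target_mat)) is the log of the posterior at that column. The CPU-only sketch below works under that reading; `Mat` and `DiffXentRef` are hypothetical names, and any numerical safeguards a real implementation may apply before taking the log are left out.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical CPU stand-in for a matrix; not a Kaldi type.
using Mat = std::vector<std::vector<float>>;

// On entry net_out_or_diff holds posteriors; on exit it holds dE/da.
void DiffXentRef(Mat &net_out_or_diff, const std::vector<int> &tgt,
                 std::vector<float> *log_post_tgt) {
  assert(tgt.size() == net_out_or_diff.size());  // one target per row
  log_post_tgt->assign(tgt.size(), 0.0f);
  for (std::size_t r = 0; r < tgt.size(); ++r) {
    const int t = tgt[r];
    assert(t >= 0 && static_cast<std::size_t>(t) < net_out_or_diff[r].size());
    (*log_post_tgt)[r] = std::log(net_out_or_diff[r][t]);  // log posterior of target
    net_out_or_diff[r][t] -= 1.0f;                         // posterior - one-hot target
  }
}
```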

Definition at line 1957 of file cu-matrix.cc.

Referenced by CuMatrixBase< float >::SizeInBytes(), and kaldi::UnitTestCuDiffXent().

|

inline |

Definition at line 221 of file cu-matrix.h.

Referenced by CuVectorBase< float >::AddColSumMat(), CuVectorBase< float >::AddDiagMatMat(), CuMatrixBase< float >::AddMatBlocks(), CuMatrixBase< float >::AddRowRanges(), CuVectorBase< float >::AddRowSumMat(), CuMatrix< float >::CompObjfAndDeriv(), LstmNonlinearityComponent::ConsolidateMemory(), kaldi::cu::Copy(), CuVectorBase< float >::CopyColFromMat(), CuTpMatrix< Real >::CopyFromMat(), CuCompressedMatrix< I >::CopyFromMat(), CuSpMatrix< Real >::CopyFromMat(), CuMatrixBase< float >::CopyFromMat(), CuSparseMatrix< Real >::CopyToMat(), CuCompressedMatrix< I >::CopyToMat(), kaldi::cu::DiffNormalizePerRow(), kaldi::cu::EnsureNonzero(), CuTpMatrix< Real >::Invert(), kaldi::cu::NormalizePerRow(), kaldi::cu::Randomize(), kaldi::cu::RegularizeL1(), CuBlockMatrix< Real >::SetCudaData(), kaldi::cu::Splice(), NonlinearComponent::StoreStatsInternal(), CuMatrixBase< float >::SumColumnRanges(), kaldi::TraceMatMat(), kaldi::TraceMatSmat(), and NonlinearComponent::UpdateStats().

| void DivElements | ( | const CuMatrixBase< Real > & | A | ) |

Divides *this by A elementwise: *this = *this ./ A.

Definition at line 691 of file cu-matrix.cc.

Referenced by CuMatrixBase< float >::ApplyLog(), CuVectorBase< float >::DivElements(), kaldi::UnitTestCuMatrixDivElements(), and kaldi::UnitTestCuMatrixSetMatMatDivMat().

| void DivRowsVec | ( | const CuVectorBase< Real > & | div | ) |

Divides the i'th row by div[i].

Definition at line 899 of file cu-matrix.cc.

Referenced by CuMatrixBase< float >::ApplyLog(), StatisticsPoolingComponent::Backprop(), StatisticsPoolingComponent::Propagate(), kaldi::TestCuMatrixDivRowsVec(), and kaldi::UnitTestCuMatrixDivRowsVec().

| void EqualElementMask | ( | const CuMatrixBase< Real > & | mat, |

| CuMatrix< Real > * | mask | ||

| ) | const |

Definition at line 3429 of file cu-matrix.cc.

Referenced by MaxpoolingComponent::Backprop(), MaxPoolingComponent::BackpropagateFnc(), and CuMatrixBase< float >::operator()().

| void Exp | ( | const CuMatrixBase< Real > & | src | ) |

Definition at line 2456 of file cu-matrix.cc.

Referenced by CuMatrixBase< float >::ApplyExp(), and CuMatrixBase< float >::SizeInBytes().

| void ExpLimited | ( | const CuMatrixBase< Real > & | src, |

| Real | lower_limit, | ||

| Real | upper_limit | ||

| ) |

This is equivalent to running: Floor(src, lower_limit); Ceiling(src, upper_limit); Exp(src)
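
As a rough illustration of the equivalence stated above, here is a minimal CPU sketch in plain C++. It is not the Kaldi CUDA kernel; the name `ExpLimitedRef` and the flat `std::vector<float>` storage are assumptions made for the example.

```cpp
#include <algorithm>
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical CPU reference (not Kaldi code): floor each source element at
// lower_limit, cap it at upper_limit, then exponentiate the clamped value.
std::vector<float> ExpLimitedRef(const std::vector<float> &src,
                                 float lower_limit, float upper_limit) {
  std::vector<float> out(src.size());
  for (std::size_t i = 0; i < src.size(); ++i) {
    // Same order as Floor(...) followed by Ceiling(...).
    float x = std::min(std::max(src[i], lower_limit), upper_limit);
    out[i] = std::exp(x);
  }
  return out;
}
```

Clamping before the exponential keeps the result inside [exp(lower_limit), exp(upper_limit)], which is the usual reason for bounding the argument.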

Definition at line 2541 of file cu-matrix.cc.

Referenced by CuMatrixBase< float >::ApplyExpLimited(), and CuMatrixBase< float >::SizeInBytes().

| void ExpSpecial | ( | const CuMatrixBase< Real > & | src | ) |

For each element x of the matrix, set it to (x < 0 ? exp(x) : x + 1).

This function is used in our RNNLM training.
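
The per-element rule can be sketched as a scalar function in plain C++. This is only an illustration of the formula above, and the name `ExpSpecialRef` is hypothetical.

```cpp
#include <cmath>

// Hypothetical scalar reference for the documented rule:
// exp(x) for negative x, x + 1 otherwise.
float ExpSpecialRef(float x) {
  return x < 0.0f ? std::exp(x) : x + 1.0f;
}
```

Both branches give 1 at x = 0 and both have slope 1 there, so the function and its first derivative are continuous at the switch point.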

Definition at line 2563 of file cu-matrix.cc.

Referenced by CuMatrixBase< float >::ApplyExpSpecial(), and CuMatrixBase< float >::SizeInBytes().

Find the id of the maximal element for each row (resizes the 'id' array to the appropriate size).
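
A minimal CPU sketch of the per-row argmax described above, in plain C++. The name `FindRowMaxIdRef`, the nested-vector storage, and the tie-breaking rule (lowest index wins) are assumptions for the example, not statements about the Kaldi implementation.

```cpp
#include <cstddef>
#include <vector>

// Hypothetical CPU reference: for each row, return the column index of its
// largest element. Ties resolve to the lowest index (an assumption here).
std::vector<int> FindRowMaxIdRef(const std::vector<std::vector<float>> &m) {
  std::vector<int> id(m.size(), -1);  // -1 marks an empty row
  for (std::size_t r = 0; r < m.size(); ++r) {
    for (std::size_t c = 0; c < m[r].size(); ++c) {
      if (id[r] < 0 || m[r][c] > m[r][id[r]]) id[r] = static_cast<int>(c);
    }
  }
  return id;
}
```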

Definition at line 1829 of file cu-matrix.cc.

Referenced by CuMatrixBase< float >::ApplyLog(), kaldi::nnet3::ComputeAccuracy(), NnetUpdater::ComputeTotAccuracy(), Xent::Eval(), kaldi::TestCuFindRowMaxId(), and kaldi::UnitTestCuFindRowMaxId().

| void Floor | ( | const CuMatrixBase< Real > & | src, |

| Real | floor_val | ||

| ) |

Definition at line 2582 of file cu-matrix.cc.

Referenced by CuMatrixBase< float >::ApplyFloor(), and CuMatrixBase< float >::SizeInBytes().

|

inline |

Definition at line 226 of file cu-matrix.h.

Referenced by CuMatrixBase< float >::ApproxEqual(), kaldi::nnet3::ConstrainOrthonormalInternal(), and kaldi::UnitTestCuSparseMatrixFrobeniusNorm().

| void GroupMax | ( | const CuMatrixBase< Real > & | src | ) |

Apply the function y(i) = max_{j = i*G}^{(i+1)*G-1} x_j, where G = x.NumCols() / y.NumCols() must be an integer.

[note: y corresponds to *this and x to src, so src.NumCols() / this->NumCols() must be an integer.]
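
The grouping can be sketched on the CPU as follows. This is a plain C++ illustration, not Kaldi code; `Matrix`, `GroupMaxRef`, and the `out_cols` parameter (standing in for this->NumCols()) are hypothetical names.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <vector>

using Matrix = std::vector<std::vector<float>>;  // hypothetical row-major matrix

// Hypothetical CPU reference: each output column i holds the maximum of the
// G consecutive source columns [i*G, (i+1)*G).
Matrix GroupMaxRef(const Matrix &src, std::size_t out_cols) {
  Matrix out(src.size(), std::vector<float>(out_cols));
  for (std::size_t r = 0; r < src.size(); ++r) {
    assert(out_cols > 0 && !src[r].empty() && src[r].size() % out_cols == 0);
    const std::size_t G = src[r].size() / out_cols;  // group size
    for (std::size_t i = 0; i < out_cols; ++i) {
      out[r][i] = *std::max_element(src[r].begin() + i * G,
                                    src[r].begin() + (i + 1) * G);
    }
  }
  return out;
}
```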

Definition at line 1617 of file cu-matrix.cc.

Referenced by MaxoutComponent::Propagate(), CuMatrixBase< float >::SizeInBytes(), kaldi::TestCuMatrixGroupMax(), kaldi::TestCuMatrixGroupMaxAllGroupSizes(), and kaldi::UnitTestCuMatrixGroupMax().

| void GroupMaxDeriv | ( | const CuMatrixBase< Real > & | input, |

| const CuMatrixBase< Real > & | output | ||

| ) |

Calculate derivatives for the GroupMax function above, where "input" is the input to the GroupMax function above (i.e. the "src" variable), and "output" is the result of the computation (i.e. the "this" of that function call), and *this must have the same dimension as "input". Each element of *this will be set to 1 if the corresponding input equals the output of the group, and 0 otherwise. This equals the function derivative where it is defined (it's not defined where multiple inputs in the group are equal to the output).
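
A minimal CPU sketch of the indicator rule described above, in plain C++. The names `Matrix` and `GroupMaxDerivRef` are hypothetical, and the function returns the derivative instead of writing into *this.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

using Matrix = std::vector<std::vector<float>>;  // hypothetical row-major matrix

// Hypothetical CPU reference: deriv(r, j) is 1 where input(r, j) equals the
// maximum of its group, i.e. output(r, j / G), and 0 elsewhere.
Matrix GroupMaxDerivRef(const Matrix &input, const Matrix &output) {
  assert(input.size() == output.size());
  Matrix deriv(input.size());
  for (std::size_t r = 0; r < input.size(); ++r) {
    assert(!output[r].empty() && input[r].size() % output[r].size() == 0);
    const std::size_t G = input[r].size() / output[r].size();  // group size
    deriv[r].resize(input[r].size());
    for (std::size_t j = 0; j < input[r].size(); ++j) {
      deriv[r][j] = (input[r][j] == output[r][j / G]) ? 1.0f : 0.0f;
    }
  }
  return deriv;
}
```

When several inputs in a group tie for the maximum, this sketch marks all of them with 1, matching the "equals the output" rule in the text.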

Definition at line 874 of file cu-matrix.cc.

Referenced by MaxoutComponent::Backprop(), CuMatrixBase< float >::SizeInBytes(), kaldi::TestCuMatrixGroupMaxDeriv(), and kaldi::UnitTestCuMatrixGroupMaxDeriv().

| void GroupPnorm | ( | const CuMatrixBase< Real > & | src, |

| Real | pow | ||

| ) |

Apply the function y(i) = (sum_{j = i*G}^{(i+1)*G-1} x_j ^ (pow)) ^ (1 / pow), where G = x.NumCols() / y.NumCols() must be an integer.

[note: y corresponds to *this and x to src, so src.NumCols() / this->NumCols() must be an integer.]
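
The group p-norm can be sketched on the CPU as below. This is plain C++, not Kaldi code; `Matrix`, `GroupPnormRef`, and `out_cols` are hypothetical names. The sketch takes absolute values before raising to the power, which is an assumption based on the usual p-norm convention; the formula as documented does not show them.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

using Matrix = std::vector<std::vector<float>>;  // hypothetical row-major matrix

// Hypothetical CPU reference: output column i is the p-norm of the G
// consecutive source columns [i*G, (i+1)*G).
Matrix GroupPnormRef(const Matrix &src, std::size_t out_cols, float p) {
  Matrix out(src.size(), std::vector<float>(out_cols));
  for (std::size_t r = 0; r < src.size(); ++r) {
    assert(out_cols > 0 && src[r].size() % out_cols == 0);
    const std::size_t G = src[r].size() / out_cols;  // group size
    for (std::size_t i = 0; i < out_cols; ++i) {
      float sum = 0.0f;
      for (std::size_t j = i * G; j < (i + 1) * G; ++j) {
        sum += std::pow(std::fabs(src[r][j]), p);  // |x_j|^p (assumed abs)
      }
      out[r][i] = std::pow(sum, 1.0f / p);
    }
  }
  return out;
}
```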

Definition at line 1576 of file cu-matrix.cc.

Referenced by PnormComponent::Propagate(), CuMatrixBase< float >::SizeInBytes(), kaldi::TestCuMatrixDiffGroupPnorm(), kaldi::TestCuMatrixGroupPnorm(), and kaldi::UnitTestCuMatrixGroupPnorm().

| void Heaviside | ( | const CuMatrixBase< Real > & | src | ) |

Set each element to the Heaviside function of the corresponding element of "src", which we define as the function (x > 0 ? 1.0 : 0.0) [note: in general, there are different ways to deal with the situation when x==0].

Definition at line 2435 of file cu-matrix.cc.

Referenced by CuMatrixBase< float >::ApplyHeaviside(), RectifiedLinearComponent::Backprop(), CuRand< float >::BinarizeProbs(), kaldi::CuCompressedMatrixTestSign(), CuMatrixBase< float >::SizeInBytes(), RectifiedLinearComponent::StoreStats(), and kaldi::UnitTestCuMatrixHeaviside().

| void InvertElements | ( | ) |

Invert the matrix elementwise (each element x becomes 1/x).

Definition at line 932 of file cu-matrix.cc.

Referenced by CuMatrixBase< float >::ApplyLog(), kaldi::TestCuMatrixCompObjfAndDeriv(), NnetEnsembleTrainer::TrainOneMinibatch(), kaldi::UnitTestCuMatrixInvertElements(), and kaldi::UnitTestCuMatrixObjfDeriv().

| bool IsUnit | ( | Real | tol = 0.001 | ) | const |

Definition at line 629 of file cu-matrix.cc.

Referenced by CuMatrixBase< float >::FrobeniusNorm(), OnlinePreconditioner::InitOrthonormalSpecial(), kaldi::UnitTestCuMatrixSymInvertPosDef(), and kaldi::UnitTestCuSpMatrixInvert().

|

private |

| void Log | ( | const CuMatrixBase< Real > & | src | ) |

Definition at line 2477 of file cu-matrix.cc.

Referenced by CuMatrixBase< float >::ApplyLog(), and CuMatrixBase< float >::SizeInBytes().

| void LogSoftMaxPerRow | ( | const CuMatrixBase< Real > & | src | ) |

LogSoftmax nonlinearity Y = LogSoftmax(X) : Yij = Xij - log(sum_k(e^Xik)), done to each row, with attention to avoiding overflow or underflow.

Supports in-place operation (i.e. this == &src).
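
One common way to avoid overflow and underflow in this formula is to subtract the row maximum before exponentiating. The sketch below shows that technique for a single row in plain C++; it is not taken from the Kaldi kernel, and the name `LogSoftmaxRowRef` is hypothetical.

```cpp
#include <algorithm>
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical CPU reference for one row: y_j = x_j - log(sum_k exp(x_k)),
// computed with the row maximum subtracted so exp() never sees a large
// positive argument.
std::vector<float> LogSoftmaxRowRef(const std::vector<float> &x) {
  std::vector<float> y(x.size());
  if (x.empty()) return y;
  const float max_x = *std::max_element(x.begin(), x.end());
  float sum = 0.0f;
  for (float v : x) sum += std::exp(v - max_x);  // every term is in (0, 1]
  const float log_sum = std::log(sum);           // sum >= 1, so log_sum >= 0
  for (std::size_t j = 0; j < x.size(); ++j) {
    y[j] = (x[j] - max_x) - log_sum;
  }
  return y;
}
```

Because the largest term contributes exp(0) = 1, the sum is at least 1 and the logarithm is always finite, even for inputs such as 1000 that would overflow a direct exp().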

Definition at line 1740 of file cu-matrix.cc.

Referenced by CuMatrixBase< float >::ApplyLogSoftMaxPerRow(), LogSoftmaxComponent::Propagate(), CuMatrixBase< float >::SizeInBytes(), kaldi::TestCuMatrixLogSoftmax(), and kaldi::UnitTestCuLogSoftmax().

| void Lookup | ( | const std::vector< Int32Pair > & | indexes, |

| Real * | output | ||

| ) | const |

Definition at line 3370 of file cu-matrix.cc.

Referenced by NnetDiscriminativeUpdater::LatticeComputations(), CuMatrixBase< float >::operator()(), kaldi::TestCuMatrixLookup(), and kaldi::UnitTestCuMatrixLookup().

| void Lookup | ( | const CuArrayBase< Int32Pair > & | indexes, |

| Real * | output | ||

| ) | const |

Definition at line 3395 of file cu-matrix.cc.

|

inline |

Definition at line 755 of file cu-matrix.h.

Referenced by CuMatrixBase< float >::AddCols(), CuVectorBase< float >::AddColSumMat(), CuVectorBase< float >::AddDiagMat2(), CuVectorBase< float >::AddDiagMatMat(), CuMatrixBase< float >::AddDiagVecMat(), CuMatrixBase< float >::AddMat(), CuSpMatrix< Real >::AddMat2(), CuMatrixBase< float >::AddMatBlocks(), CuMatrixBase< float >::AddMatDiagVec(), CuMatrixBase< float >::AddMatMat(), CuMatrixBase< float >::AddMatMatElements(), CuMatrixBase< float >::AddMatSmat(), CuVectorBase< float >::AddMatVec(), CuMatrixBase< float >::AddRows(), CuVectorBase< float >::AddRowSumMat(), CuMatrixBase< float >::AddSmatMat(), GeneralMatrix::AddToMat(), CuMatrixBase< float >::AddToRows(), kaldi::cu::BackpropLstmNonlinearity(), CuMatrixBase< float >::Ceiling(), kaldi::cu::ComputeLstmNonlinearity(), kaldi::cu::Copy(), CuVectorBase< float >::CopyColFromMat(), CuMatrixBase< float >::CopyCols(), CuVectorBase< float >::CopyElements(), CuTpMatrix< Real >::CopyFromMat(), CuSpMatrix< Real >::CopyFromMat(), CuMatrixBase< float >::CopyFromMat(), CuMatrixBase< float >::CopyRows(), CuVectorBase< float >::CopyRowsFromMat(), VectorBase< float >::CopyRowsFromMat(), CuSparseMatrix< Real >::CopyToMat(), GeneralMatrix::CopyToMat(), CuMatrixBase< float >::DiffGroupPnorm(), CuMatrixBase< float >::DiffParametricRelu(), CuMatrixBase< float >::DiffSigmoid(), CuMatrixBase< float >::DiffTanh(), CuMatrixBase< float >::DivElements(), CuMatrixBase< float >::Exp(), CuMatrixBase< float >::ExpLimited(), CuMatrixBase< float >::ExpSpecial(), CuMatrixBase< float >::Floor(), CuMatrixBase< float >::GroupMax(), CuMatrixBase< float >::GroupMaxDeriv(), CuMatrixBase< float >::GroupPnorm(), CuMatrixBase< float >::Heaviside(), CuMatrixBase< float >::Log(), CuMatrixBase< float >::LogSoftMaxPerRow(), CuMatrixBase< float >::Max(), CuMatrixBase< float >::Min(), CuMatrixBase< float >::MulElements(), CuMatrixBase< float >::MulRows(), CuMatrixBase< float >::MulRowsGroupMat(), CuMatrixBase< float >::ParametricRelu(), CuMatrixBase< float >::Pow(), CuMatrixBase< float >::PowAbs(), CuRand< float >::RandGaussian(), kaldi::cu::Randomize(), CuRand< float >::RandUniform(), kaldi::cu::RegularizeL1(), CuMatrixBase< float >::SetMatMatDivMat(), CuMatrixBase< float >::Sigmoid(), CuMatrixBase< float >::SoftHinge(), CuMatrixBase< float >::SoftMaxPerRow(), kaldi::cu::Splice(), CuMatrixBase< float >::SymAddMat2(), CuMatrixBase< float >::Tanh(), kaldi::TraceMatMat(), and kaldi::TraceMatSmat().

|

inline |

Definition at line 758 of file cu-matrix.h.

| void Max | ( | const CuMatrixBase< Real > & | A | ) |

Do, elementwise, *this = max(*this, A).

Definition at line 715 of file cu-matrix.cc.

Referenced by kaldi::CuCompressedMatrixTestNonnegative(), kaldi::CuCompressedMatrixTestSymmetric(), main(), MaxpoolingComponent::Propagate(), SpliceMaxComponent::Propagate(), kaldi::TestCuMatrixMax(), kaldi::UnitTestCuMatrixMax(), and kaldi::UnitTestCuMatrixReduceMax().

| Real Max | ( | ) | const |

Definition at line 3033 of file cu-matrix.cc.

Referenced by CuMatrixBase< float >::ApplyLog(), and CuMatrixBase< float >::operator()().

| void Min | ( | const CuMatrixBase< Real > & | A | ) |

Do, elementwise, *this = min(*this, A).

Definition at line 740 of file cu-matrix.cc.

Referenced by kaldi::CuCompressedMatrixTestNonnegative(), kaldi::CuCompressedMatrixTestSymmetric(), main(), kaldi::TestCuMatrixMin(), kaldi::UnitTestCuMatrixMin(), and kaldi::UnitTestCuMatrixReduceMin().

| Real Min | ( | ) | const |

Definition at line 3054 of file cu-matrix.cc.

Referenced by CuMatrixBase< float >::ApplyLog(), and CuMatrixBase< float >::operator()().

| void MulColsVec | ( | const CuVectorBase< Real > & | scale | ) |

Scale i'th column by scale[i].

Definition at line 765 of file cu-matrix.cc.

Referenced by CuMatrixBase< float >::ApplyLog(), BatchNormComponent::Backprop(), FixedScaleComponent::Backprop(), PerElementScaleComponent::Backprop(), Rescale::BackpropagateFnc(), ScaleAndOffsetComponent::BackpropInternal(), LstmNonlinearityComponent::ConsolidateMemory(), ModelCollapser::PreMultiplyAffineParameters(), BatchNormComponent::Propagate(), FixedScaleComponent::Propagate(), PerElementScaleComponent::Propagate(), Rescale::PropagateFnc(), ScaleAndOffsetComponent::PropagateInternal(), kaldi::UnitTestCuMatrixAddMatDiagVec(), and kaldi::UnitTestCuMatrixMulColsVec().

| void MulElements | ( | const CuMatrixBase< Real > & | A | ) |

Multiply two matrices elementwise: C = C .* A.

Definition at line 667 of file cu-matrix.cc.

Referenced by CuMatrixBase< float >::ApplyLog(), ElementwiseProductComponent::Backprop(), BackpropTruncationComponent::Backprop(), MaxpoolingComponent::Backprop(), SigmoidComponent::Backprop(), TanhComponent::Backprop(), PowerComponent::Backprop(), RectifiedLinearComponent::Backprop(), SoftHingeComponent::Backprop(), HiddenSoftmax::BackpropagateFnc(), Dropout::BackpropagateFnc(), ScaleAndOffsetComponent::BackpropInternal(), kaldi::nnet1::ComputeStdDev(), LstmNonlinearityComponent::ConsolidateMemory(), CuMatrixBase< float >::DiffSoftmaxPerRow(), Mse::Eval(), ElementwiseProductComponent::Propagate(), DropoutComponent::Propagate(), KlHmm::PropagateFnc(), LengthNormComponent::PropagateFnc(), Dropout::PropagateFnc(), ClipGradientComponent::RepairGradients(), NnetEnsembleTrainer::TrainOneMinibatch(), kaldi::UnitTestCuMatrixAddMatMatElements(), kaldi::UnitTestCuMatrixMulElements(), kaldi::nnet1::UnitTestLengthNorm(), AffineTransform::Update(), FramePoolingComponent::Update(), ConvolutionalComponent::Update(), Rescale::Update(), and NaturalGradientPerElementScaleComponent::Update().

| void MulRows | ( | const CuMatrixBase< Real > & | src, |

| const CuArrayBase< MatrixIndexT > & | indexes | ||

| ) |

Does for each row r, this.Row(r) *= src.row(indexes[r]), where '*=' is elementwise multiplication.

If indexes[r] < 0, does not multiply anything (row r is left as it is). src.NumCols() must equal this.NumCols().
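
A minimal CPU sketch of the indexed row multiplication, in plain C++. `Matrix` and `MulRowsRef` are hypothetical names, and a `std::vector<int>` stands in for the index array; the sketch assumes a negative index means "skip this row".

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

using Matrix = std::vector<std::vector<float>>;  // hypothetical row-major matrix

// Hypothetical CPU reference: multiply row r of *dst elementwise by row
// indexes[r] of src; rows with a negative index are skipped.
void MulRowsRef(Matrix *dst, const Matrix &src,
                const std::vector<int> &indexes) {
  assert(dst->size() == indexes.size());
  for (std::size_t r = 0; r < dst->size(); ++r) {
    if (indexes[r] < 0) continue;  // leave this row untouched
    const std::vector<float> &row = src[static_cast<std::size_t>(indexes[r])];
    assert(row.size() == (*dst)[r].size());  // column counts must match
    for (std::size_t c = 0; c < row.size(); ++c) {
      (*dst)[r][c] *= row[c];
    }
  }
}
```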

Definition at line 2790 of file cu-matrix.cc.

Referenced by GeneralDropoutComponent::Backprop(), and GeneralDropoutComponent::Propagate().

| void MulRowsGroupMat | ( | const CuMatrixBase< Real > & | src | ) |

Divide each row into src.NumCols() groups, and then scale the i'th row's j'th group of elements by src[i, j].
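
The group-wise scaling can be sketched on the CPU as follows. This is plain C++, not Kaldi code; `Matrix` and `MulRowsGroupMatRef` are hypothetical names, and the sketch assumes the groups are contiguous and of equal size.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

using Matrix = std::vector<std::vector<float>>;  // hypothetical row-major matrix

// Hypothetical CPU reference: row i of *m is split into src[i].size()
// contiguous groups of equal size, and every element of group j is
// multiplied by src[i][j].
void MulRowsGroupMatRef(Matrix *m, const Matrix &src) {
  assert(m->size() == src.size());
  for (std::size_t i = 0; i < m->size(); ++i) {
    const std::size_t num_groups = src[i].size();
    assert(num_groups > 0 && (*m)[i].size() % num_groups == 0);
    const std::size_t group_size = (*m)[i].size() / num_groups;
    for (std::size_t k = 0; k < (*m)[i].size(); ++k) {
      (*m)[i][k] *= src[i][k / group_size];  // k / group_size is the group id
    }
  }
}
```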