Keywords for search: natural gradient, naturalgradient, NG-SGD.

#include <nnet-precondition-online.h>

Public Member Functions | |

| OnlinePreconditioner () | |

| void | SetRank (int32 rank) |

| void | SetUpdatePeriod (int32 update_period) |

| void | SetNumSamplesHistory (BaseFloat num_samples_history) |

| void | SetAlpha (BaseFloat alpha) |

| void | TurnOnDebug () |

| BaseFloat | GetNumSamplesHistory () const |

| BaseFloat | GetAlpha () const |

| int32 | GetRank () const |

| int32 | GetUpdatePeriod () const |

| void | PreconditionDirections (CuMatrixBase< BaseFloat > *R, CuVectorBase< BaseFloat > *row_prod, BaseFloat *scale) |

| OnlinePreconditioner (const OnlinePreconditioner &other) | |

| OnlinePreconditioner & | operator= (const OnlinePreconditioner &other) |

Static Private Member Functions | |

| static void | InitOrthonormalSpecial (CuMatrixBase< BaseFloat > *R) |

| This function creates a matrix with orthonormal rows that is like the following matrix (rows separated by ';'), except with each row normalized to have unit 2-norm: [ 1.1 0 1 0 1 0 ; 0 1.1 0 1 0 1 ]. The reason why the first nonzero element in each row is 1.1 and not 1 is for symmetry-breaking. | |

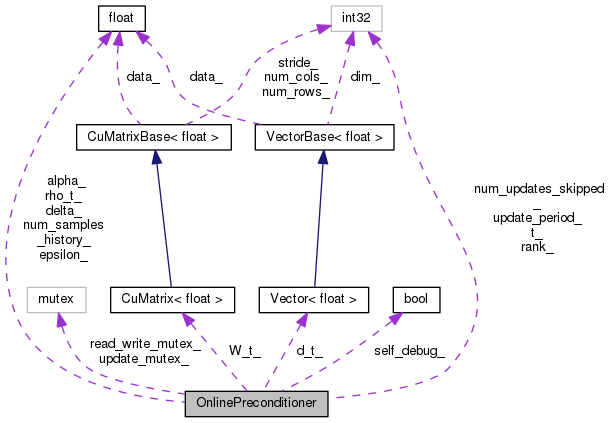

Private Attributes | |

| int32 | rank_ |

| int32 | update_period_ |

| BaseFloat | num_samples_history_ |

| BaseFloat | alpha_ |

| BaseFloat | epsilon_ |

| BaseFloat | delta_ |

| int32 | t_ |

| int32 | num_updates_skipped_ |

| bool | self_debug_ |

| CuMatrix< BaseFloat > | W_t_ |

| BaseFloat | rho_t_ |

| Vector< BaseFloat > | d_t_ |

| std::mutex | read_write_mutex_ |

| std::mutex | update_mutex_ |

This method is explained in the paper "Parallel training of DNNs with Natural Gradient and Parameter Averaging" by D. Povey, X. Zhang and S. Khudanpur, ICLR Workshop, 2015, where it is referred to as online NG-SGD. Note that the method exported from this header is just the core of the algorithm, and some outer-level parts of it are implemented in class NaturalGradientAffineComponent.

The rest of this extended comment describes the way we keep updated an estimate of the inverse of a scatter matrix, in an online way. This is the same as the estimation of one of the A or B quantities in the paper. This comment is slightly redundant with the paper (it actually precedes the paper), but we keep it in case it is useful in understanding our method.

We consider the problem of doing online estimation of a (scaled-identity plus low-rank) approximation of a Fisher matrix... since the Fisher matrix is a scatter of vector-valued derivatives and we will be given the derivatives (or at least terms in a factorization of the derivatives which need not concern us right now), we can just think of the present task as being the online accumulation of a (low-rank plus scaled-identity) approximation to a variance of a distribution with mean zero.

Later on we'll think about how to get easy access to the inverse of this approximate variance, which is what we really need.

Our approximation to the Fisher matrix (the scatter of derivatives) will be of the following form (and just think of this as an approximate variance matrix of some arbitrary quantities).

F_t =(def) R_t^T D_t R_t + \rho_t I

(t is the minibatch index), where R_t is an R by D matrix with orthonormal rows (1 <= R < D is our chosen rank), D_t is a positive-definite diagonal matrix, and \rho_t > 0. Suppose the dimension of F_t is D. Let the vectors whose variance we are approximating be provided in minibatches of size N (N can vary from iteration to iteration, but it won't vary in the normal case, so we omit the subscript t). The batch of gradients is given as X_t \in Re^{N \times D}, i.e. each row is one of the vectors whose scatter we're estimating. On the t'th iteration, define the scatter S_t of the input vectors X_t as:

S_t =(def) 1/N X_t^T X_t (eqn:St)

(where N is the minibatch size). Be careful not to confuse the rank R with the input X_t (we would typeface X_t in bold if this were not plain text, to make the distinction clearer). We want F_t to approach some kind of time-weighted average of the S_t quantities, to the extent permitted by the limitation of the rank R. We want the F_t quantities to stay "fresh" (since we'll be doing this in an SGD context and the parameters will be slowly changing). We use a constant 0 < \eta < 1 to control the updating rate. Our update for R_t is based on the power method. Define the smoothed scatter

T_t =(def) \eta S_t + (1-\eta) F_t

we'll use this in place of the observed scatter S_t, to slow down the update. Defining

Y_t =(def) R_t T_t

which can be expanded as follows (the last step uses R_t R_t^T = I):

Y_t = R_t ( \eta S_t + (1-\eta) F_t )

= R_t ( \eta S_t + (1-\eta) (R_t^T D_t R_t + \rho_t I) )

= \eta R_t S_t + (1-\eta) (D_t + \rho_t I) R_t

It is useful to think of Y_t as having each of the top eigenvectors of the scatter scaled by the corresponding eigenvalue \lambda_i. We compute the following R by R matrix:

Z_t =(def) Y_t Y_t^T

and do the symmetric eigenvalue decomposition

Z_t = U_t C_t U_t^T

where C_t is diagonal and U_t orthogonal; the diagonal elements of C_t will be positive (since \rho_t > 0, T_t is positive definite; since R_t has full row rank and T_t is positive definite, Y_t has full row rank; hence Z_t is positive definite). The diagonal elements of C_t can be thought of as corresponding to the squares of our current estimate of the top eigenvalues of the scatter matrix. [We should check that no element of C_t is <= 0.]

It is easy to show that C_t^{-0.5} U_t^T Z_t U_t C_t^{-0.5} = I, so

(C_t^{-0.5} U_t^T Y_t) (Y_t^T U_t C_t^{-0.5}) = I.

Define

R_{t+1} =(def) C_t^{-0.5} U_t^T Y_t

and it's clear that R_{t+1} R_{t+1}^T = I. We will set

D_{t+1} =(def) C_t^{0.5} - \rho_{t+1} I (eqn:dt1)

which ensures that for each row r of R_{t+1}, the variance of our scatter matrix F_{t+1} in the direction of that row will be the square root of the corresponding diagonal element of C_t. This makes sense because, as we have pointed out, the diagonal elements of C_t can be thought of as corresponding to squared eigenvalues. But a proper treatment of this would require convergence analysis that would get quite complicated. We will choose \rho_{t+1} in order to ensure that tr(F_{t+1}) = tr(T_t).

For any t,

tr(F_t) = D \rho_t + tr(D_t)

tr(T_t) = \eta tr(S_t) + (1-\eta) tr(F_t)

= \eta tr(S_t) + (1-\eta) (D \rho_t + tr(D_t))

Expanding out D_{t+1} from (eqn:dt1) in the expression for tr(F_{t+1}) below:

tr(F_{t+1}) = D \rho_{t+1} + tr(D_{t+1})

= D \rho_{t+1} + tr(C_t^{0.5} - \rho_{t+1} I)

= (D - R) \rho_{t+1} + tr(C_t^{0.5})

and equating tr(F_{t+1}) with tr(T_t) (since F_{t+1} is supposed to be a low-rank approximation to T_t), we have

(D - R) \rho_{t+1} + tr(C_t^{0.5}) = \eta tr(S_t) + (1-\eta) (D \rho_t + tr(D_t))

Solving for \rho_{t+1},

\rho_{t+1} = 1/(D - R) (\eta tr(S_t) + (1-\eta)(D \rho_t + tr(D_t)) - tr(C_t^{0.5})). (eqn:rhot1)
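As a minimal sketch of (eqn:rhot1), the following evaluates \rho_{t+1} from the scalar traces. The function name RhoNext and its argument names are hypothetical and are not part of the Kaldi API; the traces are assumed to have been computed elsewhere.

```cpp
#include <cassert>

// Hypothetical helper (not part of Kaldi): evaluates (eqn:rhot1) from scalars.
// tr_S = tr(S_t), tr_D = tr(D_t), tr_sqrt_C = tr(C_t^{0.5}); D is the full
// dimension and R the rank, with 1 <= R < D.
double RhoNext(double eta, double tr_S, double rho_t, double tr_D,
               double tr_sqrt_C, int D, int R) {
  assert(R >= 1 && R < D);
  // tr(T_t) = eta tr(S_t) + (1 - eta) (D rho_t + tr(D_t))
  const double tr_T = eta * tr_S + (1.0 - eta) * (D * rho_t + tr_D);
  // Match tr(F_{t+1}) = (D - R) rho_{t+1} + tr(C_t^{0.5}) to tr(T_t).
  return (tr_T - tr_sqrt_C) / (D - R);
}
```

The result is not floored here; the floor on \rho_{t+1} is a separate step.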

Note that it is technically possible that diagonal elements of D_{t+1} may be negative, but we can still show that F_{t+1} is strictly positive definite if F_t was strictly positive definite.

If the quantities for which we are computing the Fisher matrix are all zero for some reason, the sequence of F_t will geometrically approach zero, which would cause problems with inversion; to prevent this happening, after setting D_{t+1} and \rho_{t+1} as above, we floor \rho_{t+1} to a small value (like 1.0e-10).

OK, we have described the updating of R_t, D_t and \rho_t. Next, we need to figure out how to efficiently multiply by the inverse of F_t. Our experience from working with the old preconditioning method was that it's best not to use the inverse of the Fisher matrix itself, but a version of the Fisher matrix that's smoothed with some constant times the identity. Below, \alpha is a configuration value (e.g. 4.0 seemed to work well). The following formula is designed to ensure that the smoothing varies proportionally with the scale of F_t:

G_t =(def) F_t + \alpha/D tr(F_t) I

= R_t^T D_t R_t + (\rho_t + \alpha/D tr(F_t)) I

= R_t^T D_t R_t + \beta_t I

where

\beta_t =(def) \rho_t + \alpha/D tr(F_t)

= \rho_t (1+\alpha) + \alpha/D tr(D_t) (eqn:betat2)

Define

\hat{X}_t =(def) \beta_t X_t G_t^{-1}.

The factor of \beta_t is inserted arbitrarily as it just happens to be convenient to put unit scale on X_t in the formula for \hat{X}_t; it will anyway be canceled out in the next step. Then our final preconditioned minibatch of vectors is:

\bar{X}_t = \gamma_t \hat{X}_t

where

\gamma_t = sqrt(tr(X_t X_t^T) / tr(\hat{X}_t \hat{X}_t^T)).

The factor of \gamma_t ensures that \bar{X}_t is scaled to have the same overall 2-norm as the input X_t. We found in previous versions of this method that this rescaling was helpful, as otherwise there are certain situations (e.g. at the start of training) where the preconditioned derivatives can get very large. Note that this rescaling introduces a small bias into the training, because now the scale applied to a given sample depends on that sample itself, albeit in an increasingly diluted way as the minibatch size gets large.

To efficiently compute G_t^{-1}, we will use the Woodbury matrix identity. Writing the Woodbury formula for the symmetric case,

(A + U D U^T)^{-1} = A^{-1} - A^{-1} U (D^{-1} + U^T A^{-1} U)^{-1} U^T A^{-1}

Substituting A = \beta_t I, D = D_t and U = R_t^T, this becomes

G_t^{-1} = 1/\beta_t I - 1/\beta_t^2 R_t^T (D_t^{-1} + 1/\beta_t I)^{-1} R_t

= 1/\beta_t (I - R_t^T E_t R_t)

where

E_t =(def) 1/\beta_t (D_t^{-1} + 1/\beta_t I)^{-1}, (eqn:etdef)

so

e_{tii} = 1/\beta_t * 1/(1/d_{tii} + 1/\beta_t) (eqn:tii)

= 1/(\beta_t/d_{tii} + 1)
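The two scalar formulas (eqn:betat2) and (eqn:tii) can be sketched as below. This is an illustration only: Beta and ComputeE are hypothetical names, the diagonal of D_t is passed as a plain std::vector, and none of this reflects how the class stores these quantities internally.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical helper: beta_t = rho_t (1 + alpha) + alpha/D tr(D_t)  (eqn:betat2).
// d holds the R diagonal elements of D_t; D is the full dimension.
double Beta(double rho, double alpha, const std::vector<double> &d, int D) {
  assert(D > 0);
  double tr_D = 0.0;
  for (double x : d) tr_D += x;
  return rho * (1.0 + alpha) + alpha / D * tr_D;
}

// Hypothetical helper: e_{tii} = 1 / (beta_t / d_{tii} + 1)  (eqn:tii).
std::vector<double> ComputeE(const std::vector<double> &d, double beta) {
  std::vector<double> e(d.size());
  for (std::size_t i = 0; i < d.size(); i++) {
    assert(d[i] != 0.0);  // the text floors D_t so that this holds
    e[i] = 1.0 / (beta / d[i] + 1.0);
  }
  return e;
}
```

A quick sanity check on the Woodbury step: along row i of R_t, G_t has eigenvalue d_{tii} + \beta_t, and 1/\beta_t (1 - e_{tii}) simplifies to 1/(d_{tii} + \beta_t), as expected for the inverse.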

We would like an efficient-to-compute expression for \hat{X}_t, without too many separate invocations of kernels on the GPU.

\hat{X}_t = \beta_t X_t G_t^{-1}

= X_t - X_t R_t^T E_t R_t

For efficient operation on the GPU, we want to reduce the number of high-dimensional operations that we do (defining "high-dimension" as anything involving D or the minibatch size N, but not R, since R is likely small, such as 20). We define

W_t =(def) E_t^{0.5} R_t.

We will actually be storing W_t on the GPU rather than R_t, in order to reduce the number of operations on the GPU. We can now write:

\hat{X}_t = X_t - X_t W_t^T W_t (eqn:pt2)

The following, which we'll compute on the GPU, are going to be useful in computing quantities like Z_t:

H_t =(def) X_t W_t^T (dim is N by R)

J_t =(def) H_t^T X_t (dim is R by D)

= W_t X_t^T X_t

K_t =(def) J_t J_t^T (dim is R by R, symmetric); transfer this to CPU.

L_t =(def) H_t^T H_t (dim is R by R, symmetric); transfer this to CPU.

= W_t X_t^T X_t W_t^T

Note: L_t may also be computed as

L_t = J_t W_t^T

which may be more efficient if D < N.

Note: after we have computed H_t we can directly compute

\hat{X}_t = X_t - H_t W_t
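To make the order of operations in (eqn:pt2) concrete, here is a CPU-only sketch using nested std::vector as a stand-in for the GPU matrices. The Matrix alias and the function name PreconditionSketch are assumptions made for this example; the real class works on CuMatrixBase objects.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Row-major dense matrix; a hypothetical stand-in for the GPU matrix type.
using Matrix = std::vector<std::vector<double>>;

// X is N x D and W is R x D. Returns X_hat = X - (X W^T) W, forming each
// element of the small N x R product H = X W^T before touching X_hat.
Matrix PreconditionSketch(const Matrix &X, const Matrix &W) {
  const std::size_t N = X.size(), R = W.size();
  const std::size_t D = N > 0 ? X[0].size() : 0;
  Matrix X_hat = X;
  for (std::size_t n = 0; n < N; n++) {
    for (std::size_t r = 0; r < R; r++) {
      assert(W[r].size() == D);
      double h = 0.0;  // H(n, r): inner product of row n of X and row r of W
      for (std::size_t j = 0; j < D; j++) h += X[n][j] * W[r][j];
      for (std::size_t j = 0; j < D; j++) X_hat[n][j] -= h * W[r][j];
    }
  }
  return X_hat;
}
```

Because R is small, the cost is dominated by the two passes over the N by D data, which is the point of storing W_t rather than R_t and E_t separately.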

We need to determine how Y_t and Z_t relate to the quantities we just defined. First, we'll expand out H_t, J_t, K_t and L_t in terms of the more fundamental quantities.

H_t = X_t R_t^T E_t^{0.5}

J_t = E_t^{0.5} R_t X_t^T X_t

K_t = E_t^{0.5} R_t X_t^T X_t X_t^T X_t R_t^T E_t^{0.5}

L_t = E_t^{0.5} R_t X_t^T X_t R_t^T E_t^{0.5}

We wrote above that

Y_t = \eta R_t S_t + (1-\eta) (D_t + \rho_t I) R_t

so

Y_t = \eta/N R_t X_t^T X_t + (1-\eta) (D_t + \rho_t I) R_t

= \eta/N E_t^{-0.5} J_t + (1-\eta) (D_t + \rho_t I) R_t (eqn:yt)

We will expand Z_t using the expression for Y_t in the line above:

Z_t = Y_t Y_t^T

= (\eta/N)^2 E_t^{-0.5} J_t J_t^T E_t^{-0.5}

+ (\eta/N)(1-\eta) E_t^{-0.5} J_t R_t^T (D_t + \rho_t I)

+ (\eta/N)(1-\eta) (D_t + \rho_t I) R_t J_t^T E_t^{-0.5}

+ (1-\eta)^2 (D_t + \rho_t I)^2

= (\eta/N)^2 E_t^{-0.5} K_t E_t^{-0.5}

+ (\eta/N)(1-\eta) E_t^{-0.5} L_t E_t^{-0.5} (D_t + \rho_t I)

+ (\eta/N)(1-\eta) (D_t + \rho_t I) E_t^{-0.5} L_t E_t^{-0.5}

+ (1-\eta)^2 (D_t + \rho_t I)^2 (eqn:Zt)

We compute Z_t on the CPU using the expression above, and then do the symmetric eigenvalue decomposition (also on the CPU):

Z_t = U_t C_t U_t^T,

and we make sure the eigenvalues are sorted from largest to smallest, for reasons that will be mentioned later.

Mathematically, no diagonal element of C_t can be less than (1-\eta)^2 \rho_t^2, and since negative or zero elements of C_t would cause us a problem later, we floor C_t to this value. (See below regarding how we ensure R_{t+1} has orthonormal rows.)

We will continue the discussion below regarding what we do with C_t and U_t. Next, we need to digress briefly and describe how to compute tr(\hat{X}_t \hat{X}_t^T) and tr(X_t X_t^T), since these appear in expressions for \gamma_t (needed to produce the output \bar{X}_t), and for \rho_{t+1}. It happens that we need, for purposes of applying "max_change" in the neural net code, the squared 2-norm of each row of the output \bar{X}_t. In order to be able to compute \gamma_t, it's most convenient to compute this squared row-norm for each row of \hat{X}_t, as a vector, to compute tr(\hat{X}_t \hat{X}_t^T) from this vector as its sum, and to then work back to compute tr(X_t X_t^T) from the relation between \hat{X}_t and X_t. We can then scale the row-norms we computed for \hat{X}_t, so they apply to \bar{X}_t.

For current purposes, you can imagine that we computed tr(\hat{X}_t \hat{X}_t^T) directly. Using (from eqn:pt2)

\hat{X}_t = X_t - X_t W_t^T W_t,

we can expand tr(\hat{X}_t \hat{X}_t^T) as:

tr(\hat{X}_t \hat{X}_t^T) = tr(X_t X_t^T) + tr(X_t W_t^T W_t W_t^T W_t X_t^T) - 2 tr(X_t W_t^T W_t X_t^T)

= tr(X_t X_t^T) + tr(W_t X_t^T X_t W_t^T W_t W_t^T) - 2 tr(W_t X_t^T X_t W_t^T)

= tr(X_t X_t^T) + tr(L_t W_t W_t^T) - 2 tr(L_t)

= tr(X_t X_t^T) + tr(L_t E_t) - 2 tr(L_t)

(the last step uses W_t W_t^T = E_t^{0.5} R_t R_t^T E_t^{0.5} = E_t). Rearranging,

tr(X_t X_t^T) = tr(\hat{X}_t \hat{X}_t^T) - tr(L_t E_t) + 2 tr(L_t)

and the above expression can be used to obtain tr(X_t X_t^T). We can then do

\gamma_t <-- sqrt(tr(X_t X_t^T) / tr(\hat{X}_t \hat{X}_t^T))

(or one if the denominator is zero), and then

\bar{X}_t <-- \gamma_t \hat{X}_t

We can then output the per-row squared-l2-norms of \bar{X}_t by scaling those we computed from \hat{X}_t by \gamma_t^2.
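The trace bookkeeping above can be sketched as follows. ScaleResult and ComputeGamma are hypothetical names introduced for this example; the inputs are assumed to be the per-row squared norms of \hat{X}_t, the R by R matrix L_t, and the diagonal of E_t.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical return type: tr(X_t X_t^T) and the scale gamma_t.
struct ScaleResult {
  double tr_x;
  double gamma;
};

// row_norms_hat[n] is the squared 2-norm of row n of X_hat.
// L is L_t (R x R, row-major); e is the diagonal of E_t (length R).
ScaleResult ComputeGamma(const std::vector<double> &row_norms_hat,
                         const std::vector<std::vector<double>> &L,
                         const std::vector<double> &e) {
  assert(L.size() == e.size());
  double tr_hat = 0.0;  // tr(X_hat X_hat^T) is the sum of the row norms
  for (double v : row_norms_hat) tr_hat += v;
  double tr_L = 0.0, tr_LE = 0.0;
  for (std::size_t i = 0; i < e.size(); i++) {
    tr_L += L[i][i];
    tr_LE += L[i][i] * e[i];  // E_t is diagonal, so tr(L E) = sum_i L_ii e_i
  }
  const double tr_x = tr_hat - tr_LE + 2.0 * tr_L;
  const double gamma = tr_hat > 0.0 ? std::sqrt(tr_x / tr_hat) : 1.0;
  return {tr_x, gamma};
}
```

For example, with a single input row (2, 3) and W_t = (0.5, 0), we get \hat{X}_t = (1.5, 3), L_t = 1 and E_t = 0.25, and the function recovers tr(X_t X_t^T) = 13 without revisiting X_t.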

OK, the digression on how to compute \gamma_t and tr(X_t X_t^T) is over. We now return to the computation of R_{t+1}, W_{t+1}, \rho_{t+1}, D_{t+1} and E_{t+1}.

We found above in (eqn:rhot1)

\rho_{t+1} = 1/(D - R) (\eta tr(S_t) + (1-\eta)(D \rho_t + tr(D_t)) - tr(C_t^{0.5})).

Expanding out S_t from its definition in (eqn:St),

\rho_{t+1} = 1/(D - R) (\eta/N tr(X_t X_t^T) + (1-\eta)(D \rho_t + tr(D_t)) - tr(C_t^{0.5})).

We can compute this directly as all the quantities involved are already known or easy to compute. Next, from (eqn:dt1), we compute

D_{t+1} = C_t^{0.5} - \rho_{t+1} I

At this point if \rho_{t+1} is smaller than some small value \epsilon, e.g. 1.0e-10, we set it to \epsilon; as mentioned, we do this to stop F_t approaching zero if all inputs are zero. Next, if any diagonal element D_{t+1,i,i} has absolute value less than \epsilon, we set it to +\epsilon. This is to ensure that diagonal elements of E are never zero, which would cause problems.
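The ordering of these steps (form D_{t+1} from the unfloored \rho_{t+1}, then floor \rho_{t+1}, then floor small-magnitude diagonal elements) can be sketched as below. This follows the prose above rather than the Kaldi source, and the name NextDiagonal and its signature are hypothetical.

```cpp
#include <cassert>
#include <cmath>
#include <vector>

// Hypothetical helper. c holds the diagonal of C_t and *rho holds rho_{t+1}
// from (eqn:rhot1). Returns the diagonal of D_{t+1} and floors *rho in place.
std::vector<double> NextDiagonal(const std::vector<double> &c, double *rho,
                                 double epsilon = 1.0e-10) {
  assert(rho != nullptr);
  std::vector<double> d;
  d.reserve(c.size());
  // D_{t+1} = C_t^{0.5} - rho_{t+1} I, using rho before it is floored.
  for (double ci : c) d.push_back(std::sqrt(ci) - *rho);
  if (*rho < epsilon) *rho = epsilon;
  // Elements with tiny magnitude are set to +epsilon, so E never has a zero.
  for (double &di : d)
    if (std::fabs(di) < epsilon) di = epsilon;
  return d;
}
```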

Next, we compute (from eqn:betat2, eqn:etdef, eqn:tii),

\beta_{t+1} = \rho_{t+1} (1+\alpha) + \alpha/D tr(D_{t+1})

E_{t+1} = 1/\beta_{t+1} (D_{t+1}^{-1} + 1/\beta_{t+1} I)^{-1},

i.e.: e_{t+1,ii} = 1/(\beta_{t+1}/d_{t+1,ii} + 1)

We'll want to store D_{t+1}. We next want to compute W_{t+1}.

Before computing W_{t+1}, we need to find an expression for

R_{t+1} = C_t^{-0.5} U_t^T Y_t

Expanding out Y_t using the expression in (eqn:yt),

R_{t+1} = C_t^{-0.5} U_t^T (\eta/N E_t^{-0.5} J_t + (1-\eta) (D_t + \rho_t I) R_t)

= (\eta/N C_t^{-0.5} U_t^T E_t^{-0.5}) J_t

+ ((1-\eta) C_t^{-0.5} U_t^T (D_t + \rho_t I) E_t^{-0.5}) W_t

What we actually want is W_{t+1} = E_{t+1}^{0.5} R_{t+1}:

W_{t+1} = (\eta/N E_{t+1}^{0.5} C_t^{-0.5} U_t^T E_t^{-0.5}) J_t

+ ((1-\eta) E_{t+1}^{0.5} C_t^{-0.5} U_t^T (D_t + \rho_t I) E_t^{-0.5}) W_t

and to minimize the number of matrix-matrix multiplies we can factorize this as:

W_{t+1} = A_t B_t

A_t = (\eta/N) E_{t+1}^{0.5} C_t^{-0.5} U_t^T E_t^{-0.5}

B_t = J_t + (1-\eta)/(\eta/N) (D_t + \rho_t I) W_t

[note: we use the fact that (D_t + \rho_t I) and E_t^{-0.5} commute because they are diagonal].

A_t is computed on the CPU and transferred from there to the GPU, B_t is computed on the GPU, and the multiplication of A_t with B_t is done on the GPU.

Keeping R_t orthogonal

Our method requires the R_t matrices to be orthogonal (which we define to mean that R_t R_t^T = I). If roundoff error causes this equality to be significantly violated, it could cause a problem for the stability of our method. We now address our method for making sure that the R_t values stay orthogonal. We do this in the algorithm described above, after creating W_{t+1}. This extra step is only executed if the condition number of C_t (i.e. the ratio of its largest to smallest diagonal element) exceeds a specified threshold, such as 1.0e+06 [this is tested before applying the floor to C_t]. The threshold was determined empirically by finding the largest value needed to ensure a certain level of orthogonality in R_{t+1}. For purposes of the present discussion, since R_{t+1} is not actually stored, define it as E_{t+1}^{-0.5} W_{t+1}. Define the following (and we will just use t instead of t+1 below, as all quantities have the same subscript):

O_t =(def) R_t R_t^T = E_t^{-0.5} W_t W_t^T E_t^{-0.5}

(and we would compute this by computing W_t W_t^T on the GPU, transferring it to the CPU, and doing the rest there). If O_t is not sufficiently close to the unit matrix, we can re-orthogonalize as follows: do the Cholesky decomposition

O_t = C C^T

(here C is the lower-triangular Cholesky factor, not the eigenvalue matrix C_t). Clearly C^{-1} O_t C^{-T} = I, so if we correct R_t with:

R_t <-- C^{-1} R_t

we can ensure orthogonality. If R_t's first k rows are orthogonal, this transform will not affect them, because of its lower-triangular structure. This is good because (thanks to the eigenvalue sorting) the eigenvectors with the larger eigenvalues come first, and it is more critical to keep them pointing in the same direction. Any loss of orthogonality will be dealt with by modifying the eigenvectors with smaller eigenvalues. As a modification to W_t, this would be:

W_t <-- (E_t^{0.5} C^{-1} E_t^{-0.5}) W_t,

and the matrix in parentheses is computed on the CPU, transferred to the GPU, and the multiplication is done there.
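The Cholesky-based correction can be illustrated on the CPU with plain nested vectors. This sketch operates on R_t directly rather than on W_t, and the Matrix alias and the function name ReorthogonalizeRows are assumptions made for the example; it is not the class's ReorthogonalizeXt1 routine.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

using Matrix = std::vector<std::vector<double>>;  // row-major, hypothetical

// Replaces Rm (R x D, full row rank) by C^{-1} Rm, where Rm Rm^T = C C^T and
// C is lower triangular, so that the rows of the result are orthonormal.
void ReorthogonalizeRows(Matrix *Rm) {
  assert(Rm != nullptr && !Rm->empty());
  const std::size_t R = Rm->size(), D = (*Rm)[0].size();
  // Lower triangle of O = Rm Rm^T.
  Matrix O(R, std::vector<double>(R, 0.0));
  for (std::size_t i = 0; i < R; i++)
    for (std::size_t j = 0; j <= i; j++)
      for (std::size_t k = 0; k < D; k++) O[i][j] += (*Rm)[i][k] * (*Rm)[j][k];
  // Cholesky factorization O = C C^T.
  Matrix C(R, std::vector<double>(R, 0.0));
  for (std::size_t i = 0; i < R; i++) {
    for (std::size_t j = 0; j <= i; j++) {
      double s = O[i][j];
      for (std::size_t k = 0; k < j; k++) s -= C[i][k] * C[j][k];
      if (i == j) {
        assert(s > 0.0);  // fails if the rows are linearly dependent
        C[i][i] = std::sqrt(s);
      } else {
        C[i][j] = s / C[j][j];
      }
    }
  }
  // Forward substitution, in place: rows k < i already hold the new values.
  for (std::size_t i = 0; i < R; i++) {
    for (std::size_t k = 0; k < i; k++)
      for (std::size_t j = 0; j < D; j++) (*Rm)[i][j] -= C[i][k] * (*Rm)[k][j];
    for (std::size_t j = 0; j < D; j++) (*Rm)[i][j] /= C[i][i];
  }
}
```

Because C is lower triangular, row i of the result only depends on rows 0 through i of the input, which is why leading rows that are already orthonormal are left unchanged.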

Initialization

Now, a note on what we do on time t = 0, i.e. for the first minibatch. We initialize R_0 to the top R eigenvectors of 1/N X_0^T X_0, where N is the minibatch size (num-rows of X_0). If L is the corresponding RxR diagonal matrix of eigenvalues, then we will set D_0 = L - \rho_0 I. We set \rho_0 to ensure that

tr(F_0) = 1/N tr(X_0 X_0^T),

tr(D_0) + \rho_0 D = 1/N tr(X_0 X_0^T),

tr(L) - \rho_0 R + \rho_0 D = 1/N tr(X_0 X_0^T),

\rho_0 = (1/N tr(X_0 X_0^T) - tr(L)) / (D - R)

We then floor \rho_0 to \epsilon (e.g. 1.0e-10) and also floor the diagonal elements of D_0 to \epsilon; this ensures that we won't crash for zero inputs.

A note on multi-threading. This technique was really designed for use with a GPU, where we won't have multi-threading, but we want it to work also on a CPU, where we may have multiple worker threads. Our approach is as follows (we do this when we're about to start updating the parameters R_t, D_t, and derived quantities):

For time t > 0 (where the matrices are already initialized), before starting the part of the computation that updates the parameters (R_t, D_t, and derived quantities), we try to lock a mutex that guards the OnlinePreconditioner. If we can lock it right away, we go ahead and do the update, but if not, we just abandon the attempt to update those quantities.

We will have another mutex to ensure that when we access quantities like W_t, they are all "in sync" (and we don't access them while they are being written by another thread). This mutex will only be locked for short periods of time.
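The two-mutex scheme described above can be sketched with std::mutex and std::try_to_lock. The class UpdaterSketch, its single double of state, and its method names are hypothetical; only the member names update_mutex_ and read_write_mutex_ mirror the attributes listed for this class.

```cpp
#include <mutex>

// Hypothetical stand-in for the preconditioner state, reduced to one value.
class UpdaterSketch {
 public:
  // Tries to run the update. Returns false if another thread already holds
  // the update mutex, in which case the update is simply abandoned.
  bool MaybeUpdate(double new_value) {
    std::unique_lock<std::mutex> update_lock(update_mutex_, std::try_to_lock);
    if (!update_lock.owns_lock()) return false;
    // ... the expensive computation of the new parameters would go here ...
    {
      // Hold the read/write mutex only while the shared state is swapped in.
      std::lock_guard<std::mutex> rw_lock(read_write_mutex_);
      value_ = new_value;
    }
    return true;
  }

  // Readers take the short-lived mutex so they never see a partial write.
  double Read() {
    std::lock_guard<std::mutex> rw_lock(read_write_mutex_);
    return value_;
  }

 private:
  std::mutex update_mutex_;      // guards the (long) update computation
  std::mutex read_write_mutex_;  // guards the stored quantities themselves
  double value_ = 0.0;
};
```

Skipping a contended update is harmless in this setting because the estimate is refreshed again on a later minibatch; a failed try-lock therefore costs only a slightly staler preconditioner.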

Note: it might be a good idea to make sure that the R_t still retain orthonormal rows even in the presence of roundoff, without errors accumulating. My instinct is that this isn't going to be a problem.

Definition at line 413 of file nnet-precondition-online.h.

Definition at line 27 of file nnet-precondition-online.cc.

Referenced by OnlinePreconditioner::GetUpdatePeriod().

|

explicit |

Definition at line 595 of file nnet-precondition-online.cc.

|

private |

Definition at line 577 of file nnet-precondition-online.cc.

References VectorBase< Real >::ApplyPow(), VectorBase< Real >::CopyFromVec(), rnnlm::d, VectorBase< Real >::Data(), VectorBase< Real >::Dim(), rnnlm::i, and VectorBase< Real >::InvertElements().

Referenced by OnlinePreconditioner::ComputeWt1(), OnlinePreconditioner::GetUpdatePeriod(), OnlinePreconditioner::PreconditionDirectionsInternal(), OnlinePreconditioner::ReorthogonalizeXt1(), and OnlinePreconditioner::SelfTest().

|

private |

Definition at line 501 of file nnet-precondition-online.cc.

References CuMatrixBase< Real >::AddDiagVecMat(), CuMatrixBase< Real >::AddMatMat(), OnlinePreconditioner::alpha_, OnlinePreconditioner::ComputeEt(), VectorBase< Real >::Dim(), OnlinePreconditioner::Eta(), rnnlm::i, VectorBase< Real >::InvertElements(), rnnlm::j, KALDI_ASSERT, kaldi::kNoTrans, kaldi::kTrans, kaldi::kUndefined, CuMatrixBase< Real >::NumCols(), and VectorBase< Real >::Sum().

Referenced by OnlinePreconditioner::GetUpdatePeriod(), and OnlinePreconditioner::PreconditionDirectionsInternal().

|

private |

Definition at line 546 of file nnet-precondition-online.cc.

References VectorBase< Real >::Add(), VectorBase< Real >::Dim(), OnlinePreconditioner::Eta(), rnnlm::i, and rnnlm::j.

Referenced by OnlinePreconditioner::GetUpdatePeriod(), and OnlinePreconditioner::PreconditionDirectionsInternal().

Definition at line 492 of file nnet-precondition-online.cc.

References KALDI_ASSERT, and OnlinePreconditioner::num_samples_history_.

Referenced by OnlinePreconditioner::ComputeWt1(), OnlinePreconditioner::ComputeZt(), OnlinePreconditioner::GetUpdatePeriod(), and OnlinePreconditioner::PreconditionDirectionsInternal().

|

inline |

Definition at line 424 of file nnet-precondition-online.h.

References OnlinePreconditioner::alpha_.

|

inline |

Definition at line 423 of file nnet-precondition-online.h.

References OnlinePreconditioner::num_samples_history_.

|

inline |

|

inline |

Definition at line 426 of file nnet-precondition-online.h.

References OnlinePreconditioner::ComputeEt(), OnlinePreconditioner::ComputeWt1(), OnlinePreconditioner::ComputeZt(), OnlinePreconditioner::Eta(), OnlinePreconditioner::Init(), OnlinePreconditioner::InitDefault(), OnlinePreconditioner::InitOrthonormalSpecial(), OnlinePreconditioner::OnlinePreconditioner(), OnlinePreconditioner::operator=(), OnlinePreconditioner::PreconditionDirections(), OnlinePreconditioner::PreconditionDirectionsInternal(), OnlinePreconditioner::ReorthogonalizeXt1(), OnlinePreconditioner::SelfTest(), and OnlinePreconditioner::update_period_.

|

private |

Definition at line 123 of file nnet-precondition-online.cc.

References OnlinePreconditioner::d_t_, rnnlm::i, OnlinePreconditioner::InitDefault(), kaldi::kUndefined, CuMatrixBase< Real >::NumCols(), CuMatrixBase< Real >::NumRows(), OnlinePreconditioner::PreconditionDirections(), OnlinePreconditioner::rank_, OnlinePreconditioner::rho_t_, OnlinePreconditioner::t_, and OnlinePreconditioner::W_t_.

Referenced by OnlinePreconditioner::GetUpdatePeriod(), and OnlinePreconditioner::PreconditionDirections().

|

private |

Definition at line 75 of file nnet-precondition-online.cc.

References OnlinePreconditioner::alpha_, OnlinePreconditioner::d_t_, OnlinePreconditioner::delta_, OnlinePreconditioner::epsilon_, OnlinePreconditioner::InitOrthonormalSpecial(), KALDI_ASSERT, KALDI_WARN, kaldi::kUndefined, OnlinePreconditioner::num_samples_history_, OnlinePreconditioner::rank_, OnlinePreconditioner::rho_t_, OnlinePreconditioner::t_, and OnlinePreconditioner::W_t_.

Referenced by OnlinePreconditioner::GetUpdatePeriod(), and OnlinePreconditioner::Init().

|

staticprivate |

This function creates a matrix with orthonormal rows that is like the following matrix, except with each row normalized to have unit 2-norm:

[ 1.1 0 1 0 1 0

0 1.1 0 1 0 1 ]

The reason why the first nonzero element in each row is 1.1 and not 1 is for symmetry-breaking: we don't want any weighted sum of all these rows to be all ones, because the derivative in that direction can be zero in some architectures and it causes us to have to do an inefficient CPU-based renormalization.

Definition at line 45 of file nnet-precondition-online.cc.

References CuMatrixBase< Real >::AddElements(), CuMatrixBase< Real >::AddMatMat(), rnnlm::i, CuMatrixBase< Real >::IsUnit(), KALDI_ASSERT, kaldi::kNoTrans, kaldi::kTrans, CuMatrixBase< Real >::NumCols(), CuMatrixBase< Real >::NumRows(), and CuMatrixBase< Real >::SetZero().

Referenced by OnlinePreconditioner::GetUpdatePeriod(), and OnlinePreconditioner::InitDefault().

| OnlinePreconditioner & | operator= (const OnlinePreconditioner &other) |

Definition at line 605 of file nnet-precondition-online.cc.

References OnlinePreconditioner::alpha_, OnlinePreconditioner::d_t_, OnlinePreconditioner::delta_, OnlinePreconditioner::epsilon_, OnlinePreconditioner::num_samples_history_, OnlinePreconditioner::rank_, OnlinePreconditioner::rho_t_, OnlinePreconditioner::self_debug_, OnlinePreconditioner::t_, OnlinePreconditioner::update_period_, and OnlinePreconditioner::W_t_.

Referenced by OnlinePreconditioner::GetUpdatePeriod().

| void | PreconditionDirections (CuMatrixBase< BaseFloat > *R, CuVectorBase< BaseFloat > *row_prod, BaseFloat *scale) |

Definition at line 145 of file nnet-precondition-online.cc.

References CuVectorBase< Real >::AddDiagMat2(), OnlinePreconditioner::d_t_, OnlinePreconditioner::Init(), kaldi::kNoTrans, CuMatrixBase< Real >::NumCols(), CuMatrixBase< Real >::NumRows(), OnlinePreconditioner::PreconditionDirectionsInternal(), CuMatrixBase< Real >::Range(), OnlinePreconditioner::read_write_mutex_, OnlinePreconditioner::rho_t_, OnlinePreconditioner::t_, and OnlinePreconditioner::W_t_.

Referenced by OnlinePreconditioner::GetUpdatePeriod(), OnlinePreconditioner::Init(), kaldi::nnet2::UnitTestPreconditionDirectionsOnline(), and AffineComponentPreconditionedOnline::Update().

|

private |

Definition at line 300 of file nnet-precondition-online.cc.

References VectorBase< Real >::Add(), CuVectorBase< Real >::AddDiagMat2(), CuMatrixBase< Real >::AddMatMat(), OnlinePreconditioner::alpha_, VectorBase< Real >::ApplyFloor(), VectorBase< Real >::ApplyPow(), kaldi::ApproxEqual(), OnlinePreconditioner::ComputeEt(), OnlinePreconditioner::ComputeWt1(), OnlinePreconditioner::ComputeZt(), MatrixBase< Real >::CopyLowerToUpper(), OnlinePreconditioner::d_t_, OnlinePreconditioner::delta_, SpMatrix< Real >::Eig(), OnlinePreconditioner::epsilon_, OnlinePreconditioner::Eta(), rnnlm::i, KALDI_ASSERT, KALDI_VLOG, KALDI_WARN, kaldi::kNoTrans, kaldi::kTrans, VectorBase< Real >::Max(), OnlinePreconditioner::num_updates_skipped_, CuMatrixBase< Real >::NumCols(), CuMatrixBase< Real >::NumRows(), OnlinePreconditioner::rank_, OnlinePreconditioner::read_write_mutex_, OnlinePreconditioner::ReorthogonalizeXt1(), OnlinePreconditioner::rho_t_, PackedMatrix< Real >::Scale(), VectorBase< Real >::Scale(), OnlinePreconditioner::self_debug_, OnlinePreconditioner::SelfTest(), kaldi::SortSvd(), CuVectorBase< Real >::Sum(), VectorBase< Real >::Sum(), OnlinePreconditioner::t_, SpMatrix< Real >::Trace(), kaldi::TraceMatMat(), OnlinePreconditioner::update_mutex_, OnlinePreconditioner::update_period_, and OnlinePreconditioner::W_t_.

Referenced by OnlinePreconditioner::GetUpdatePeriod(), and OnlinePreconditioner::PreconditionDirections().

|

private |

Definition at line 184 of file nnet-precondition-online.cc.

References CuMatrixBase< Real >::AddMatMat(), OnlinePreconditioner::alpha_, TpMatrix< Real >::Cholesky(), OnlinePreconditioner::ComputeEt(), CuMatrixBase< Real >::CopyFromMat(), MatrixBase< Real >::CopyFromTp(), rnnlm::i, TpMatrix< Real >::Invert(), SpMatrix< Real >::IsUnit(), rnnlm::j, KALDI_ERR, KALDI_WARN, kaldi::kNoTrans, kaldi::kTakeLower, kaldi::kUndefined, PackedMatrix< Real >::Max(), CuMatrixBase< Real >::MulRowsVec(), CuMatrixBase< Real >::NumCols(), CuMatrixBase< Real >::NumRows(), MatrixBase< Real >::OrthogonalizeRows(), OnlinePreconditioner::self_debug_, VectorBase< Real >::Sum(), and CuMatrixBase< Real >::SymAddMat2().

Referenced by OnlinePreconditioner::GetUpdatePeriod(), and OnlinePreconditioner::PreconditionDirectionsInternal().

|

private |

Definition at line 255 of file nnet-precondition-online.cc.

References CuSpMatrix< Real >::AddMat2(), OnlinePreconditioner::alpha_, OnlinePreconditioner::ComputeEt(), OnlinePreconditioner::d_t_, OnlinePreconditioner::delta_, OnlinePreconditioner::epsilon_, rnnlm::i, SpMatrix< Real >::IsUnit(), rnnlm::j, KALDI_ASSERT, KALDI_WARN, kaldi::kNoTrans, kaldi::kUndefined, OnlinePreconditioner::rho_t_, and OnlinePreconditioner::W_t_.

Referenced by OnlinePreconditioner::GetUpdatePeriod(), and OnlinePreconditioner::PreconditionDirectionsInternal().

| void | SetAlpha (BaseFloat alpha) |

Definition at line 634 of file nnet-precondition-online.cc.

References OnlinePreconditioner::alpha_, and KALDI_ASSERT.

| void | SetNumSamplesHistory (BaseFloat num_samples_history) |

Definition at line 629 of file nnet-precondition-online.cc.

References KALDI_ASSERT, and OnlinePreconditioner::num_samples_history_.

| void | SetRank (int32 rank) |

Definition at line 621 of file nnet-precondition-online.cc.

References KALDI_ASSERT, and OnlinePreconditioner::rank_.

Referenced by kaldi::nnet2::UnitTestPreconditionDirectionsOnline().

| void | SetUpdatePeriod (int32 update_period) |

Definition at line 625 of file nnet-precondition-online.cc.

References KALDI_ASSERT, and OnlinePreconditioner::update_period_.

|

inline |

Definition at line 422 of file nnet-precondition-online.h.

References OnlinePreconditioner::self_debug_.

Referenced by kaldi::nnet2::UnitTestPreconditionDirectionsOnline().

|

private |

Definition at line 531 of file nnet-precondition-online.h.

Referenced by OnlinePreconditioner::ComputeWt1(), OnlinePreconditioner::GetAlpha(), OnlinePreconditioner::InitDefault(), OnlinePreconditioner::operator=(), OnlinePreconditioner::PreconditionDirectionsInternal(), OnlinePreconditioner::ReorthogonalizeXt1(), OnlinePreconditioner::SelfTest(), and OnlinePreconditioner::SetAlpha().

Definition at line 559 of file nnet-precondition-online.h.

Referenced by OnlinePreconditioner::Init(), OnlinePreconditioner::InitDefault(), OnlinePreconditioner::operator=(), OnlinePreconditioner::PreconditionDirections(), OnlinePreconditioner::PreconditionDirectionsInternal(), and OnlinePreconditioner::SelfTest().

|

private |

Definition at line 543 of file nnet-precondition-online.h.

Referenced by OnlinePreconditioner::InitDefault(), OnlinePreconditioner::operator=(), OnlinePreconditioner::PreconditionDirectionsInternal(), and OnlinePreconditioner::SelfTest().

|

private |

Definition at line 537 of file nnet-precondition-online.h.

Referenced by OnlinePreconditioner::InitDefault(), OnlinePreconditioner::operator=(), OnlinePreconditioner::PreconditionDirectionsInternal(), and OnlinePreconditioner::SelfTest().

|

private |

Definition at line 527 of file nnet-precondition-online.h.

Referenced by OnlinePreconditioner::Eta(), OnlinePreconditioner::GetNumSamplesHistory(), OnlinePreconditioner::InitDefault(), OnlinePreconditioner::operator=(), and OnlinePreconditioner::SetNumSamplesHistory().

|

private |

Definition at line 552 of file nnet-precondition-online.h.

Referenced by OnlinePreconditioner::PreconditionDirectionsInternal().

|

private |

Definition at line 516 of file nnet-precondition-online.h.

Referenced by OnlinePreconditioner::GetRank(), OnlinePreconditioner::Init(), OnlinePreconditioner::InitDefault(), OnlinePreconditioner::operator=(), OnlinePreconditioner::PreconditionDirectionsInternal(), and OnlinePreconditioner::SetRank().

|

private |

Definition at line 563 of file nnet-precondition-online.h.

Referenced by OnlinePreconditioner::PreconditionDirections(), and OnlinePreconditioner::PreconditionDirectionsInternal().

|

private |

Definition at line 558 of file nnet-precondition-online.h.

Referenced by OnlinePreconditioner::Init(), OnlinePreconditioner::InitDefault(), OnlinePreconditioner::operator=(), OnlinePreconditioner::PreconditionDirections(), OnlinePreconditioner::PreconditionDirectionsInternal(), and OnlinePreconditioner::SelfTest().

|

private |

Definition at line 555 of file nnet-precondition-online.h.

Referenced by OnlinePreconditioner::operator=(), OnlinePreconditioner::PreconditionDirectionsInternal(), OnlinePreconditioner::ReorthogonalizeXt1(), and OnlinePreconditioner::TurnOnDebug().

|

private |

Definition at line 546 of file nnet-precondition-online.h.

Referenced by OnlinePreconditioner::Init(), OnlinePreconditioner::InitDefault(), OnlinePreconditioner::operator=(), OnlinePreconditioner::PreconditionDirections(), and OnlinePreconditioner::PreconditionDirectionsInternal().

|

private |

Definition at line 567 of file nnet-precondition-online.h.

Referenced by OnlinePreconditioner::PreconditionDirectionsInternal().

|

private |

Definition at line 521 of file nnet-precondition-online.h.

Referenced by OnlinePreconditioner::GetUpdatePeriod(), OnlinePreconditioner::operator=(), OnlinePreconditioner::PreconditionDirectionsInternal(), and OnlinePreconditioner::SetUpdatePeriod().

Definition at line 557 of file nnet-precondition-online.h.

Referenced by OnlinePreconditioner::Init(), OnlinePreconditioner::InitDefault(), OnlinePreconditioner::operator=(), OnlinePreconditioner::PreconditionDirections(), OnlinePreconditioner::PreconditionDirectionsInternal(), and OnlinePreconditioner::SelfTest().